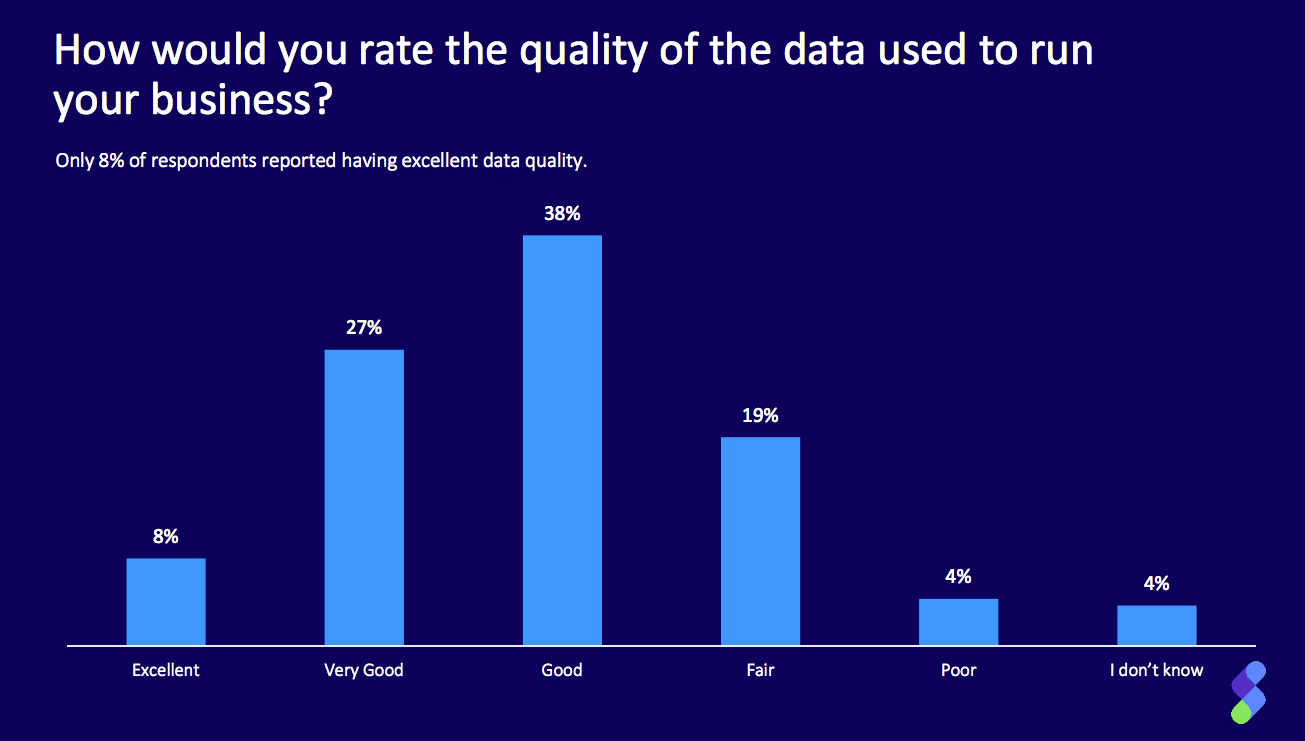

A new study from data software firm Syncsort explores data quality and organizations’ confidence in data across their enterprise. Though most respondents rated their organization’s data quality either as “good” (38 percent) or “very good” (27 percent), the survey revealed a disconnect around understanding and trust in the data—and how it informs business decisions.

Sixty-nine percent of respondents stated their leadership trusts data insights enough to inform business decisions, yet they also said only 14 percent of stakeholders had a very good understanding of the data. Of the 27 percent who reported sub-optimal data quality, 72 percent said it negatively impacted business decisions.

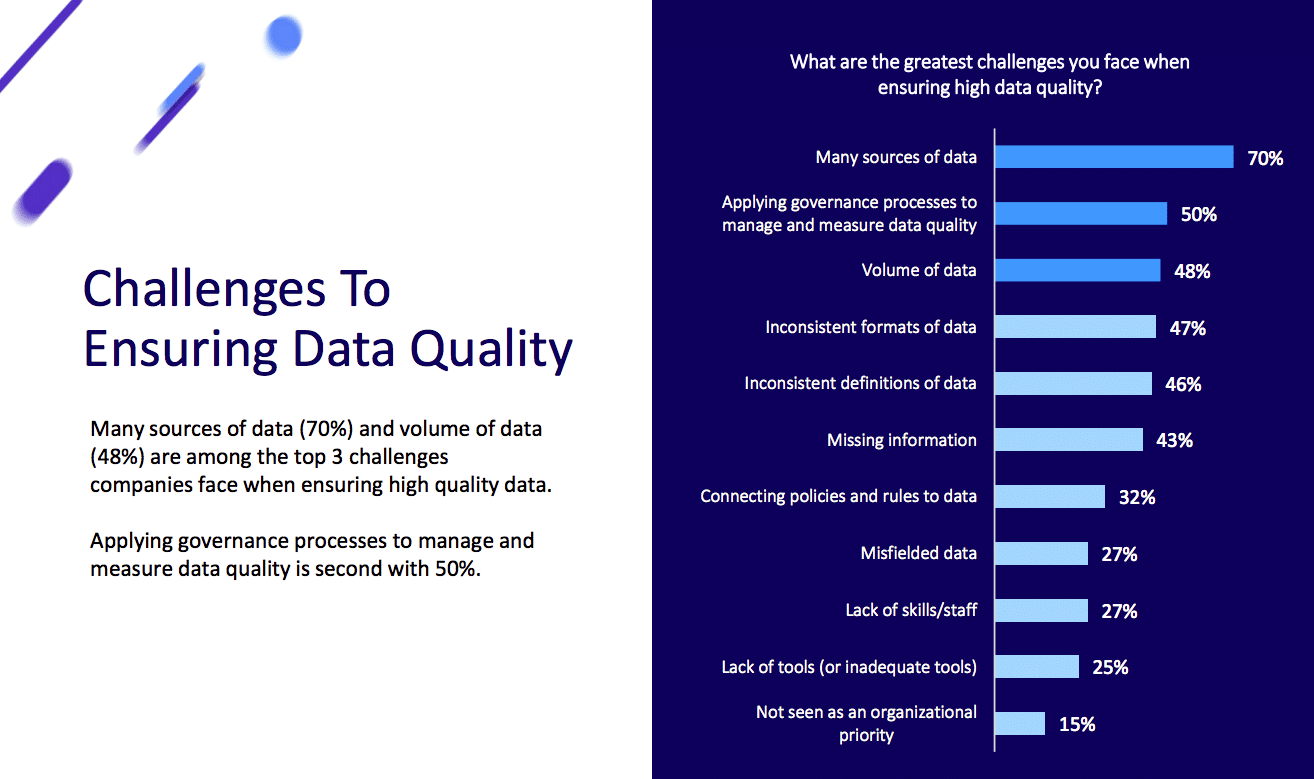

Multiple Data Sources, Governance and Volume Are Top Data Quality Challenges

- The top three challenges companies face when ensuring high quality data are multiple sources of data (70 percent), applying data governance processes (50 percent) and volume of data (48 percent).

- More than three quarters (78 percent) have challenges profiling or applying data quality processes to large data sets.

- Twenty-nine percent say they have a partial understanding of the data that exists across their organization, while 48 percent say they have a good understanding.

Data Profiling Tool Adoption Low, Leading to Lack of Visibility into Data Attributes

- Fewer than 50 percent of respondents take advantage of a data profiling tool or data catalog.

- Instead, respondents rely on other methods to understand their data, with more than 50 percent using SQL queries and over 40 percent using a BI tool. Database administrators often use MySQL's EXPLAIN statement to see how a query will be executed (for example, which indexes it uses), which helps identify inefficient queries that could slow down data quality assessments.

- Of those who reported partial, minimal or very little understanding of their data, the top three attributes respondents lacked visibility into were: relationship between data sets (63 percent), completeness of data (56 percent) and validation of data against defined rules (56 percent).
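The attributes respondents most often lacked visibility into (completeness, validation against defined rules, and relationships between data sets) can each be approximated with the kind of plain SQL queries many respondents already rely on. The sketch below is a rough illustration only, using Python's standard-library `sqlite3` module. The `customers` and `orders` tables, their columns, the sample rows, the email rule and the helper names `completeness` and `rule_pass_rate` are all hypothetical; this is not Syncsort's methodology or any product's API.

```python
import sqlite3

# Hypothetical sample data: a small "customers" table with gaps and bad values,
# plus an "orders" table that references customers by id.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO customers (id, email, country) VALUES (?, ?, ?)",
    [
        (1, "ana@example.com", "US"),
        (2, None, "DE"),
        (3, "not-an-email", None),
        (4, "li@example.org", "CN"),
    ],
)
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER)")
conn.executemany(
    "INSERT INTO orders (id, customer_id) VALUES (?, ?)",
    [(10, 1), (11, 4), (12, 99)],  # customer 99 does not exist
)


def completeness(conn, table, column):
    """Share of rows where `column` is not NULL (COUNT(col) skips NULLs)."""
    # Identifiers cannot be bound as parameters, so they are interpolated here;
    # only pass trusted table/column names.
    non_null, total = conn.execute(
        f"SELECT COUNT({column}), COUNT(*) FROM {table}"
    ).fetchone()
    return non_null / total if total else 0.0


def rule_pass_rate(conn, table, predicate):
    """Share of rows satisfying a SQL predicate; NULLs count as failures."""
    passed, total = conn.execute(
        f"SELECT SUM(CASE WHEN {predicate} THEN 1 ELSE 0 END), COUNT(*) FROM {table}"
    ).fetchone()
    return (passed or 0) / total if total else 0.0


# Completeness per column.
for col in ("email", "country"):
    print(col, "completeness:", completeness(conn, "customers", col))

# Hypothetical rule: an email needs a character, '@', a character, then a '.'.
email_rule = "email LIKE '%_@_%._%'"
print("email rule pass rate:", rule_pass_rate(conn, "customers", email_rule))

# Relationship check: orders whose customer_id has no matching customer.
orphans = conn.execute(
    "SELECT COUNT(*) FROM orders o "
    "LEFT JOIN customers c ON o.customer_id = c.id "
    "WHERE c.id IS NULL"
).fetchone()[0]
print("orphaned orders:", orphans)
```

With the sample rows above, both columns are 75 percent complete, half the rows pass the email rule, and one order points at a customer that does not exist. Dedicated profiling tools and data catalogs automate and scale checks like these across many sources, which is the gap the survey highlights.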

The Consequences of Poor Data Quality Are Wide-Ranging, from Customer Dissatisfaction to Barriers to Emerging Technology Adoption

- Of those who reported fair or poor data quality, wasted time was the number one consequence (92 percent), followed by ineffective business decisions (72 percent) and customer dissatisfaction (67 percent).

- Twenty-five percent of respondents who reported sub-optimal data quality say it has prevented their organization from adopting emerging technologies and methods such as AI, machine learning (ML) and blockchain.

- Only 16 percent of respondents are confident they aren’t feeding bad data into AI and ML applications.

- Seventy-three percent are using cloud computing for strategic workloads, but 48 percent of them have partial to no understanding of the data that exists in the cloud. Twenty-two percent rate the quality of their data in the cloud as fair or poor.

“This survey confirms what we’ve been seeing with our customers—that good data simply isn’t good enough anymore,” said Tendü Yoğurtçu, CTO at Syncsort, in a news release. “Sub-optimal data quality is a major barrier, especially to the successful, profitable use of artificial intelligence and machine learning. The classic phrase ‘garbage-in, garbage-out’ has long been used to describe the importance of data quality, but it has a multiplier effect with machine learning—first in the historical data used to train the predictive model, and second in the new data used by that model to make future decisions.”


Syncsort polled 175 respondents, 69 percent of whom work for organizations with more than 1,000 employees. Participants represented a range of industries, with the largest share from financial services (25 percent), and a range of positions from CDO to data analyst, with the largest group (29 percent) in data-focused roles.