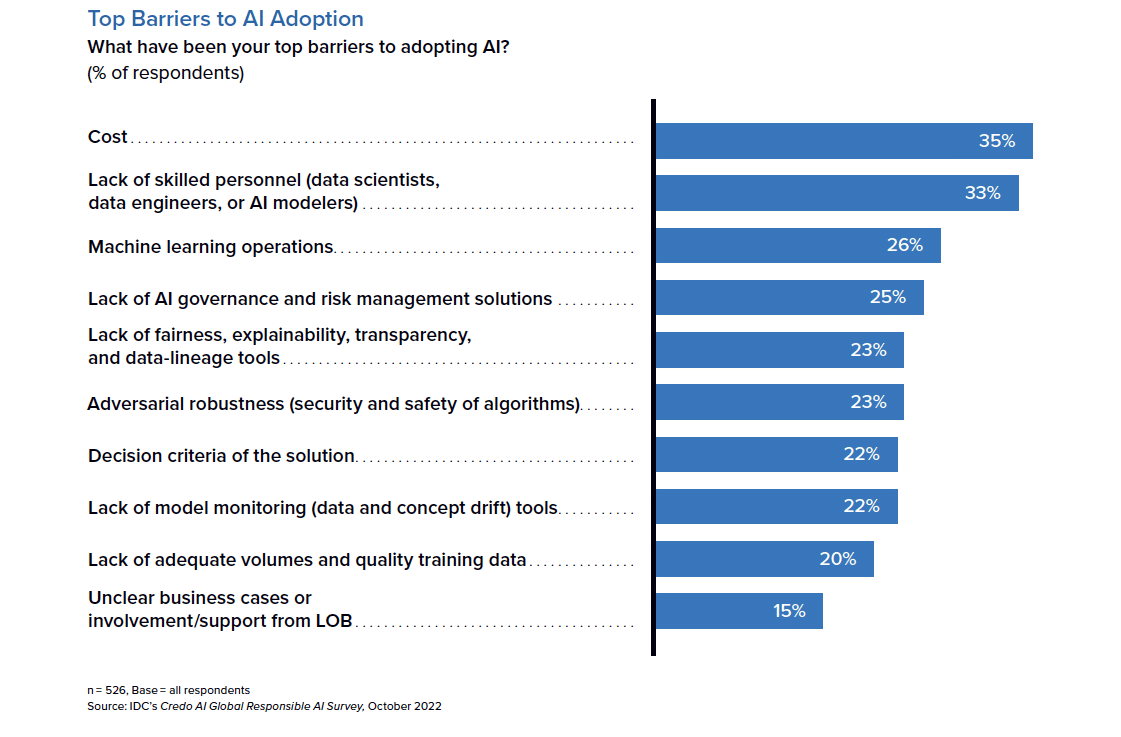

AI has proved helpful for internal workflows such as task automation, yet B2B companies (and B2C companies, for that matter) continue to struggle with using it as a customer service tool. With business buyers’ expectations for seamless experiences now sky-high, new research from market intelligence firm IDC asserts that getting AI adoption right is one of the biggest opportunities for B2B enterprises in 2023.

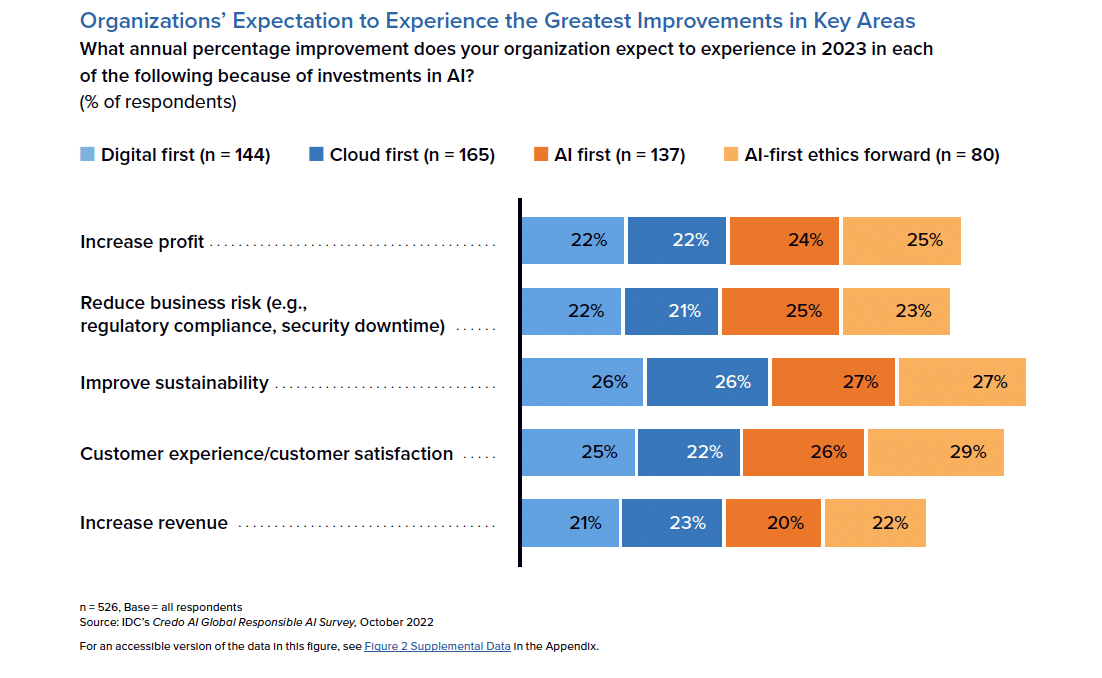

The firm’s new white paper, The Business Case for Responsible AI Governance: Unlocking Growth and Innovation While Managing Risk and Trust, sponsored by AI governance software firm Credo AI, suggests that organizations with an “AI-first, ethics-forward” approach expect significant year-over-year improvements across several business metrics, including increased revenue, heightened customer satisfaction, sustainable operations, higher profits, and reduced business risks.

The research provides valuable insights into the state of responsible AI adoption among B2B enterprise companies and identifies key challenges and opportunities.

Survey highlights:

Organizations face an increasingly complex and competitive reality, and it has become clear that embracing new technology is the only way to thrive in today’s business world.

The survey underscores the key role AI will have for B2B enterprises in 2023 and beyond. Executives expressed eagerness for their organizations to adopt responsible AI, citing customer satisfaction (30 percent), improved sustainability (30 percent) and increased profits (25 percent) as top expected business benefits.

Yet while there is a strong belief in the benefits of AI adoption, the survey also shows that many executives have reservations or low confidence about moving forward with development and implementation. Only 39 percent have a very high level of confidence, 33 percent have some confidence with reservations, and 27 percent have very low confidence in building and using AI ethically, responsibly, and compliantly.

“Organizations around the world are eager to leverage the capabilities of AI, especially generative AI, but also recognize the importance of adopting these technologies responsibly to unlock lasting ROI,” said Navrina Singh, Founder and CEO of Credo AI, in a news release. “However, there are still significant challenges to overcome, particularly around building confidence in AI and ensuring compliance with regulations. This survey is designed to help organizations identify these challenges and provide actionable insights for implementing responsible AI practices now.”

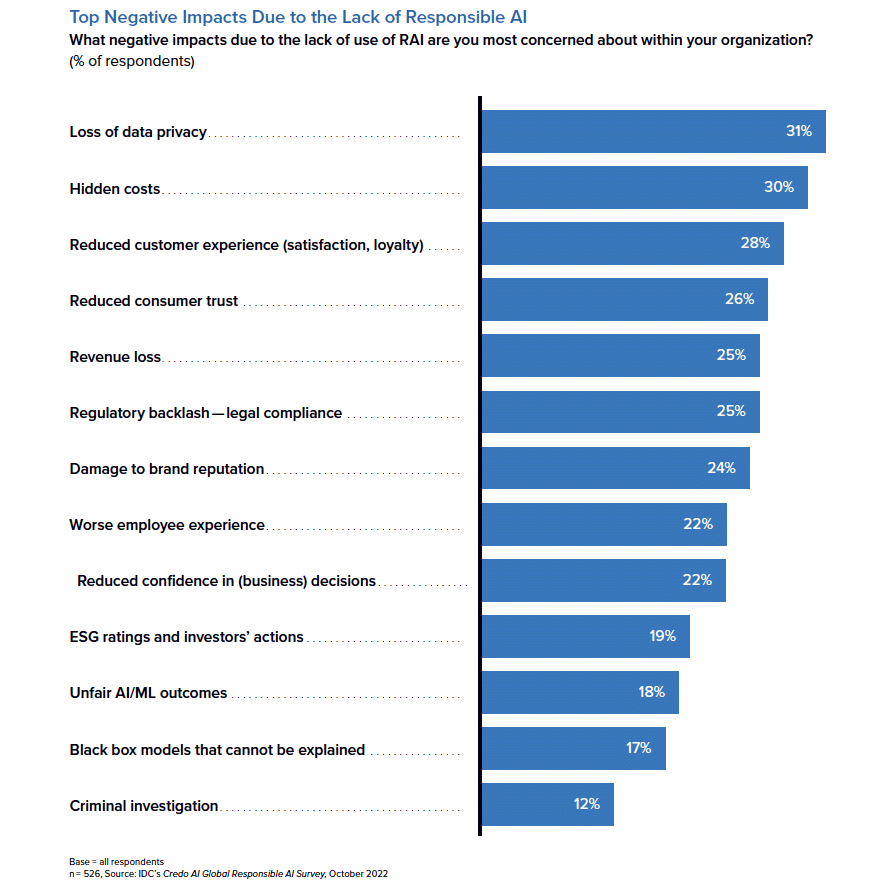

Key Insight I: Addressing concerns would jump-start AI initiatives

Despite the clear benefits of AI, many companies have yet to fully embrace it. Survey respondents said they feared the negative impact of implementing AI without the right governance in place. Key concerns were loss of privacy data (31 percent), hidden costs (29 percent) and reduced customer trust (26 percent).

Key Insight II: CIOs are driving responsible AI implementation, focusing on the EU AI Act

Globally, CIOs are the primary owners of an organization’s responsible AI strategy. They can help businesses ensure that their AI systems produce fair outcomes, protect privacy, and comply with regulations.

The CIOs surveyed named the EU AI Act as the most critical regulation as they approach their implementations (42 percent), followed by the UK White Paper on AI (37 percent) and the American Privacy Protection Act (29 percent).

As AI use increases, we will see an influx of new regulations developed to address AI’s potential negative impacts. For now, the survey makes it clear that organizations regard the EU AI Act as the most critical AI regulation for compliance, with its provisions and requirements widely recognized as the benchmark for responsible AI implementation globally.

Key Insight III: Responsible AI stretches beyond setting up governance structures

Responsible AI can help businesses ensure that their AI systems produce fair outcomes, protect privacy, and comply with regulations. As a result, businesses can improve customer experience, increase trust in the brand, and build a positive reputation as a responsible organization.

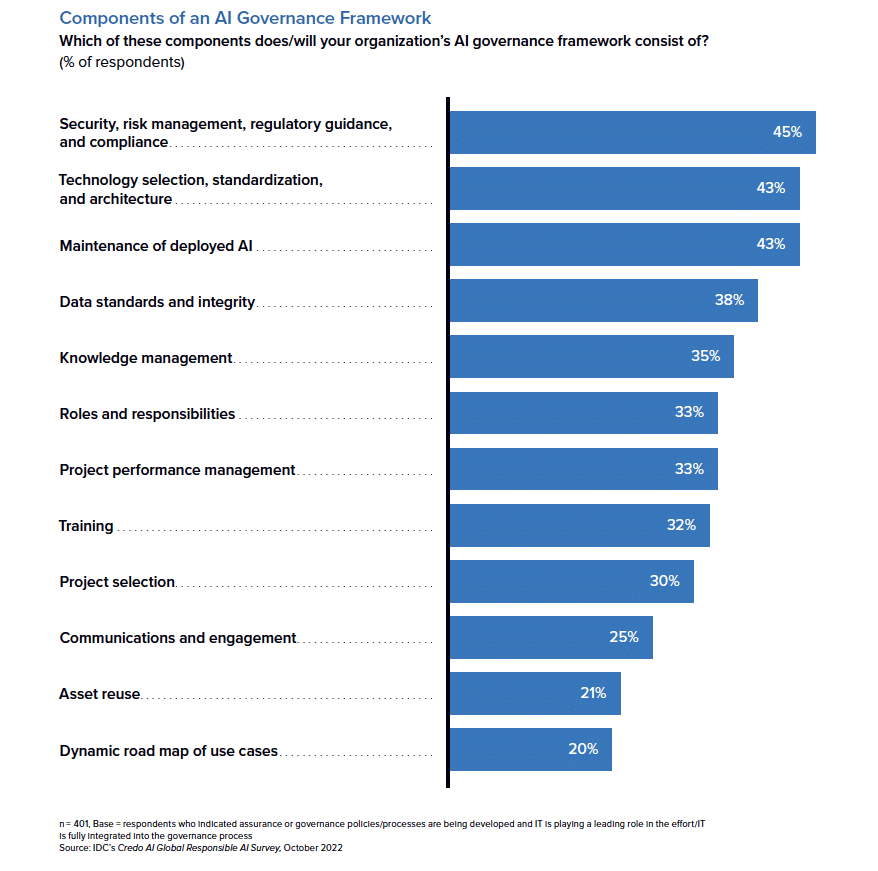

When asked what components would be included in an organization’s AI governance structure, respondents prioritized security, risk management, regulatory guidance and compliance (45 percent), followed by technology selection, standardization and architecture (43 percent).

Implementing and scaling AI responsibly is a difficult task that requires input from multiple stakeholders throughout an organization and its ecosystem. Success will be defined not only by aligning with ethical and legal standards but also by successfully integrating AI with the different software systems in use. Managing this is an area ripe for innovation.

“Responsible AI is the future of the industry and presents a wealth of opportunities for organizations,” said Ritu Jyoti, group vice president, AI and automation research practice global AI research lead at IDC, in the release. “Companies that prioritize ethical and compliant AI practices today will be better positioned to reap the benefits of improved customer satisfaction, sustainable operations, and increased profits tomorrow. The time to act is now to ensure better outcomes for both businesses and their customers.”


The survey, sponsored by Credo AI, was conducted by IDC in the fourth quarter of 2022, and included over 500 respondents from B2B enterprises globally.