Generative AI has been revolutionary for business operations, for communicators and for virtually every other sector. But the speed of its adoption is making it harder for brands and businesses to use the technology responsibly, and it is putting pressure on Responsible AI (RAI) programs to keep up with rapid and continuous advances. New large-scale global research from MIT Sloan Management Review (MIT SMR) and Boston Consulting Group (BCG) serves as a warning for organizations to invest in these RAI programs now or risk learning some catastrophic lessons the hard way.

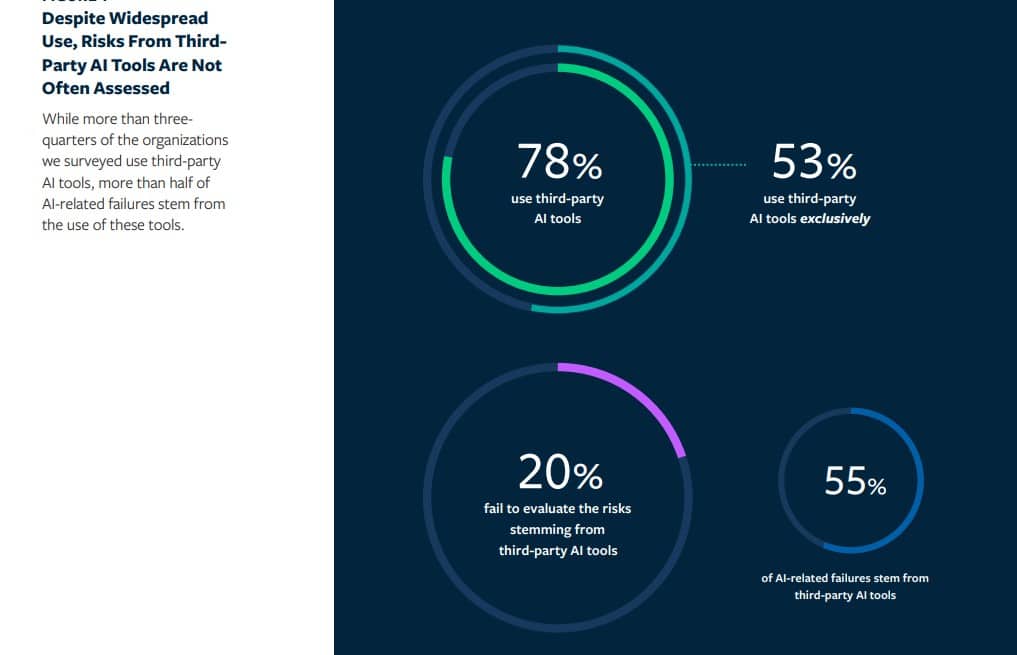

And if you think you needn’t worry because you’re not using AI tools of your own design, think again. The firms’ new report, Building Robust RAI Programs as Third-Party AI Tools Proliferate, is based on a global survey of 1,240 respondents representing organizations with at least $100 million in annual revenues across 59 industries and 87 countries. It finds that more than half (53 percent) of companies rely exclusively on third-party AI tools and have no internally designed or developed AI of their own, yet more than half (55 percent) of all AI-related failures stem from third-party AI tools.

“The AI landscape, both from a technological and regulatory perspective, has changed so dramatically since we published our report last year,” said Elizabeth M. Renieris, MIT SMR guest editor and coauthor of the report, in a news release. “In fact, with the sudden and rapid adoption of generative AI tools, AI has become dinner table conversation. And yet, many of the fundamentals remain the same. This year, our research reaffirms the urgent need for organizations to be responsible by investing in and scaling their RAI programs to address growing uses and risks of AI.”

Both RAI leaders and non-leaders need to step up

RAI leaders have increased from 16 percent of the survey sample to 29 percent year-over-year. But despite this progress, 71 percent of organizations remain non-leaders. With significant risks emerging from third-party AI tools, it’s time for these organizations to double down on their RAI efforts.

A widespread reliance on third-party AI leaves companies at great risk

The vast majority (78 percent) of organizations surveyed are highly reliant on third-party AI, exposing them to a host of risks, including reputational damage, the loss of customer trust, financial loss, regulatory penalties, compliance challenges, and litigation. Still, one-fifth of organizations that use third-party AI tools fail to evaluate their risks at all.

Employing a wide variety of approaches and methods to evaluate third-party tools is an effective strategy for mitigating risk. Organizations that employ seven different methods are more than twice as likely to uncover lapses as those that use only three (51 percent vs. 24 percent). These approaches include contractual language mandating adherence to RAI principles, vendor pre-certification and audits, internal product-level reviews, and adherence to relevant regulatory requirements and industry standards.

An AI regulatory landscape is rapidly taking shape

The regulatory landscape is evolving almost as rapidly as AI itself, with many new AI-specific regulations taking effect on a rolling basis. About half (51 percent) of those surveyed report being subject to non-AI-specific regulations that nevertheless apply to their use of AI, including a high proportion of organizations in the financial services, insurance, healthcare, and public sectors. Those subject to such regulations account for 13 percent more RAI leaders than those that are not. They also report detecting fewer AI failures than do their counterparts that are not subject to the same regulatory pressures (32 percent vs. 38 percent).

CEO engagement is vital in affirming a company’s commitment to RAI

CEOs play a key role in both affirming an organization’s commitment to RAI and sustaining the necessary investments in it. Organizations with a CEO who takes a hands-on role in RAI efforts (such as by engaging in RAI-related hiring decisions or product-level discussions, or by setting performance targets tied to RAI) report 58 percent more business benefits than do organizations with a less hands-on CEO, regardless of their leader status. Furthermore, organizations with a CEO who is directly involved in RAI are more likely to invest in RAI than are organizations with a hands-off CEO (39 percent vs. 22 percent).

Five recommendations for navigating a dramatically changing AI landscape

The report outlines five recommendations for organizations as they navigate the rapid adoption of AI and the inherent risks associated with it:

- Move quickly to mature RAI programs

- Properly evaluate third-party tools

- Take action to prepare for emerging regulations

- Engage CEOs in RAI efforts to maximize success

- Double down and invest in RAI

“Now is the time for organizations to double down and invest in a robust RAI program,” said Steven Mills, chief AI ethics officer at BCG and coauthor of the report, in the release. “While it may feel as though the technology is outpacing your RAI program’s capabilities, the solution is to increase your commitment to RAI, not pull back. Organizations need to put leadership and resources behind their efforts to deliver business value and manage the risks.”