As powerful new generative AI tools and continuous improvements to automation roll out at an increasingly rapid pace, some fear that their human skills will become unnecessary sooner rather than later. Yet a new research report finds that one of the biggest obstacles to brand and business success with AI is a lack of human involvement and oversight throughout the entire ML cycle.

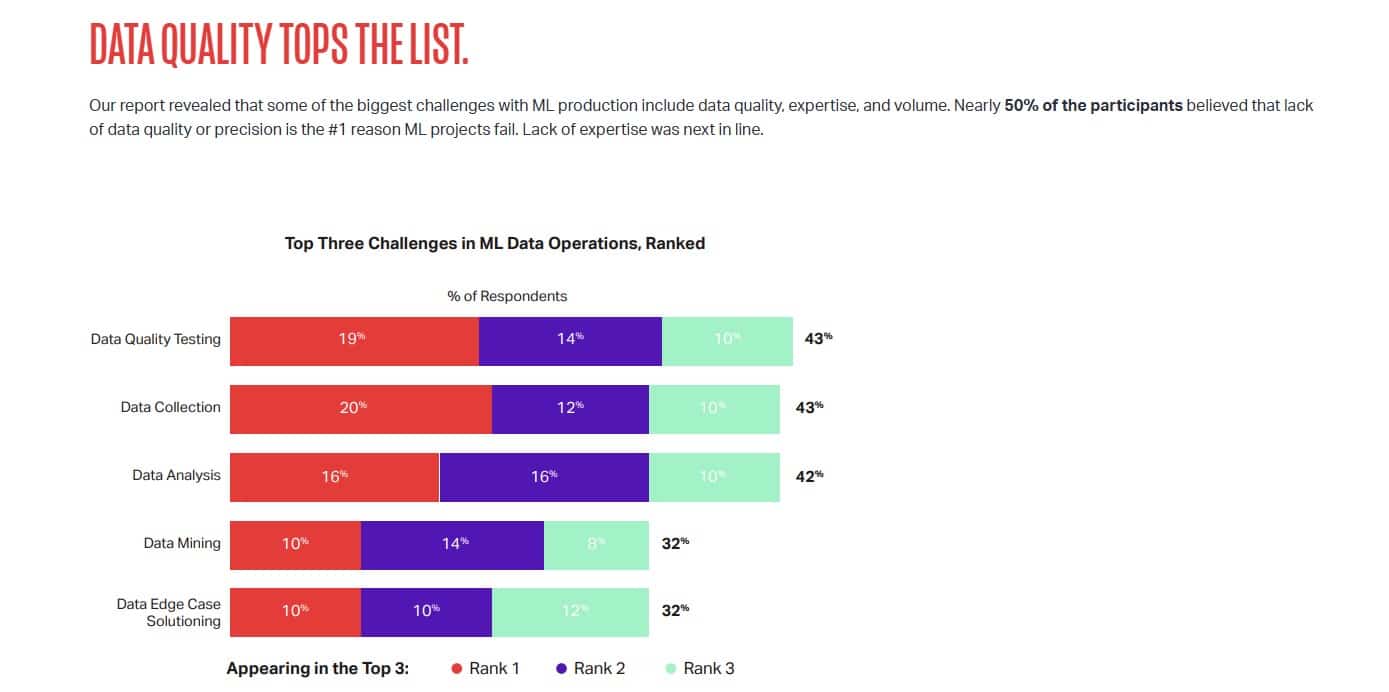

The new 2023 State of ML Ops report from data solutions firm iMerit, which surveyed AI, ML, and data practitioners across industries, found that the need for better data quality remains the biggest hindrance to AI as a business tool, followed closely by the need for more human expertise in delivering successful AI outcomes.

The world of AI has changed dramatically over the past year

AI has moved out of the lab and into a phase where deploying large-scale commercial projects is a reality. The new study shows that true experts in the loop are needed not only at the data phase but at every phase of the ML Ops lifecycle. The world’s most experienced AI practitioners understand that companies turning to human experts achieve greater efficiencies, better automation, and superior operational excellence, which in turn lead to better commercial outcomes with AI.

“Quality data is the lifeblood of AI and it will never have sufficient data quality without human expertise and input at every stage,” said Radha Basu, founder and CEO at iMerit, in a news release. “With the acceleration of AI through large language models and other generative AI tools, the need for quality data is growing. Data must be more reliable and scalable for AI projects to be successful. Large language models and generative AI will become the foundation on which many thin applications will be built. Human expertise and oversight is a critical part of this foundation.”

The report highlights survey findings in four key areas:

Data quality is the most important factor for successful commercial AI projects

Three in five AI/ML practitioners consider higher quality data to be more important than higher volumes of data for achieving successful AI. Additionally, practitioners found that accurate and precise data labeling is crucial to realizing ROI.

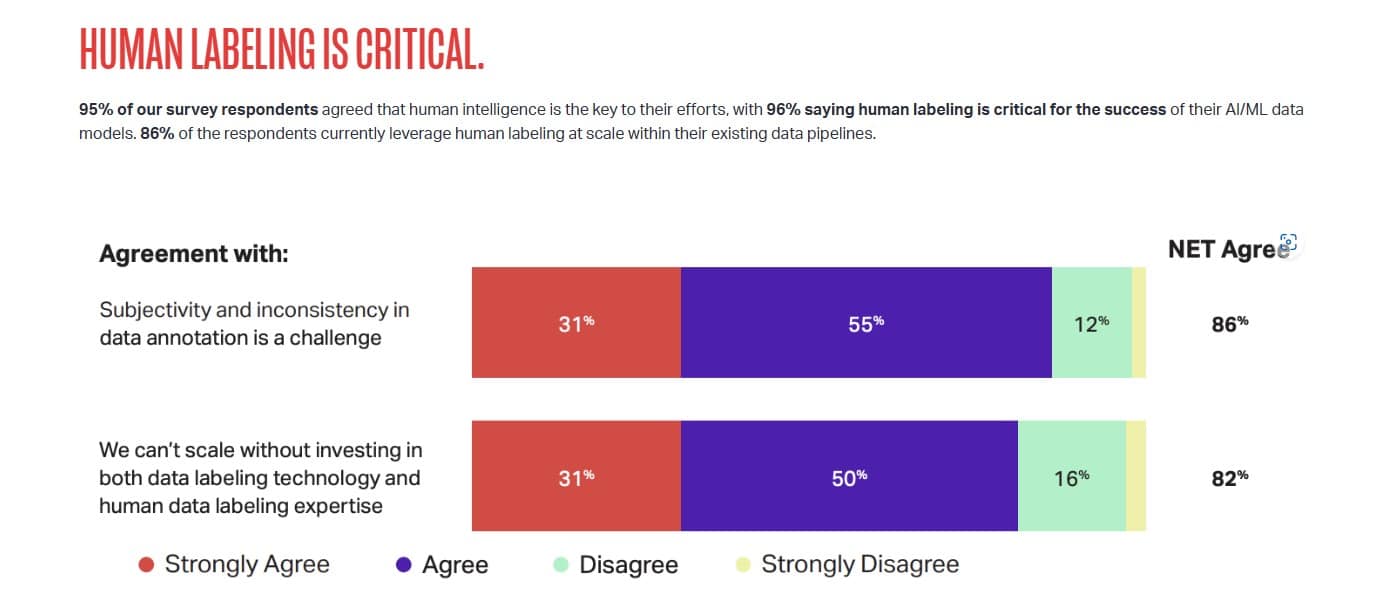

Human expertise is central to the AI equation

Nearly all survey respondents (96 percent) indicated that human expertise is a key component of their AI efforts, while 86 percent say human labeling is essential and are using expert-in-the-loop training at scale within existing projects. Automated data labeling is growing in popularity, but it still needs human oversight: the report finds that, on average, 42 percent of automated data labeling requires human intervention or correction.

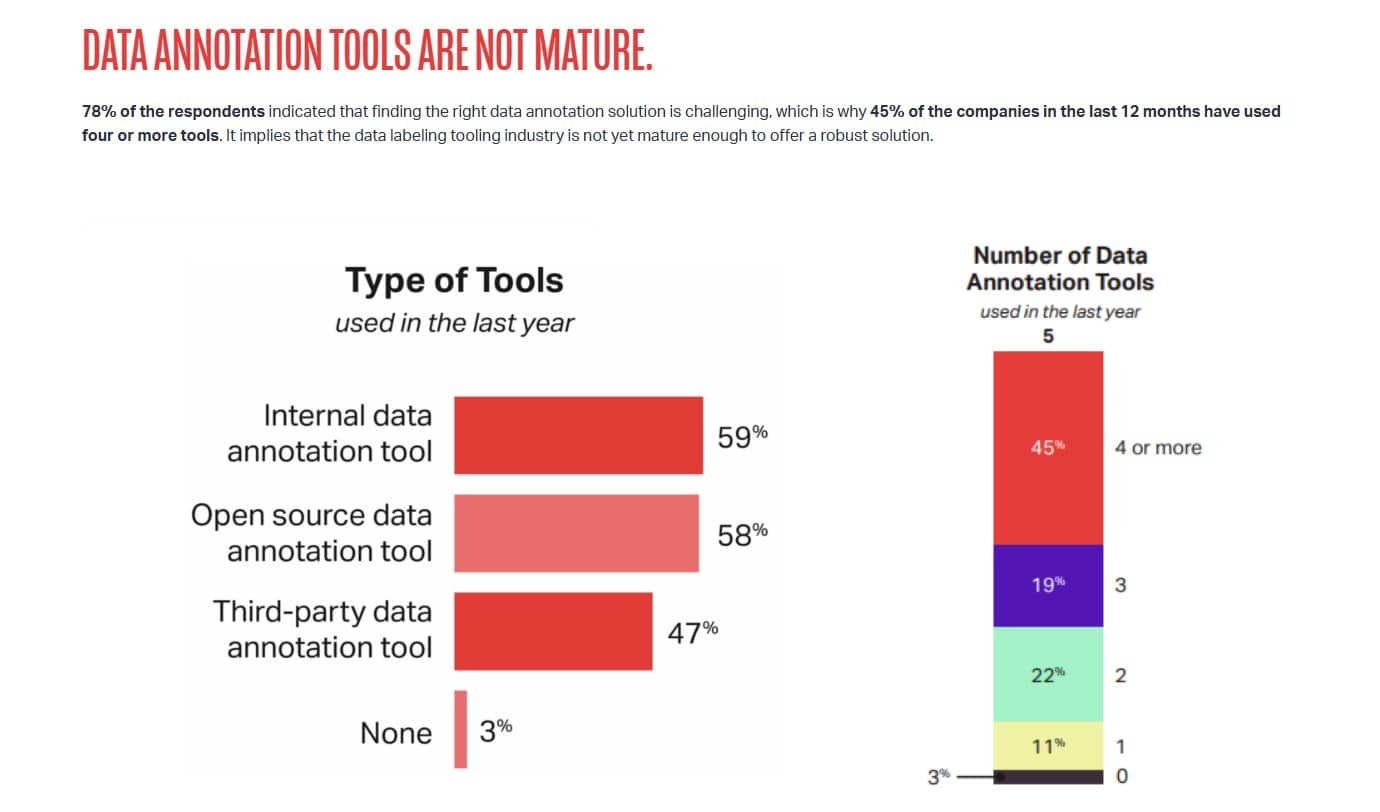

Data annotation requirements are increasing in complexity, which increases the need for human expertise and intervention

According to the study, a large majority of respondents (86 percent) indicated that subjectivity and inconsistency are the primary challenges for data annotation in any ML model. Another 82 percent reported that scaling wouldn’t be possible without investing in both automated annotation technology, such as Kognic, and human data labeling expertise. And 65 percent of respondents stated that a dedicated workforce with domain expertise is required to produce AI-ready data.

The key to commercial AI is solving edge cases with human expertise

Edge cases consume a large share of practitioners’ time. The report finds that AI/ML practitioners spend 37 percent of their time identifying and solving edge cases, and virtually all survey respondents (96 percent) stated that human expertise is required to solve them.

The full 2023 State of ML Ops report can be found here.