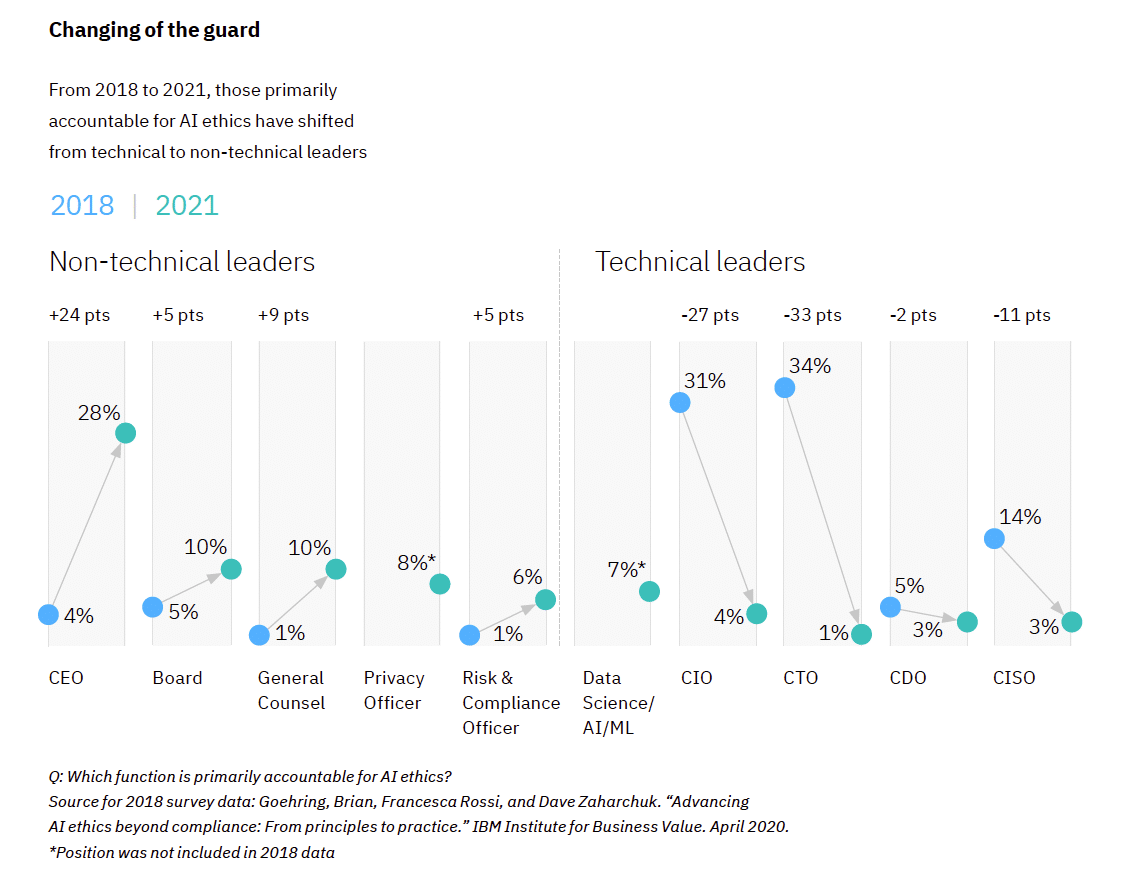

When asked which function is primarily accountable for AI ethics, 80 percent of respondents to a new survey from IBM’s Institute for Business Value (IBV) pointed to a non-technical executive, such as a CEO, as the primary “champion” for AI ethics. That is a sharp increase from 15 percent in 2018 and reveals a radical shift in the roles responsible for leading and upholding AI ethics at an organization.

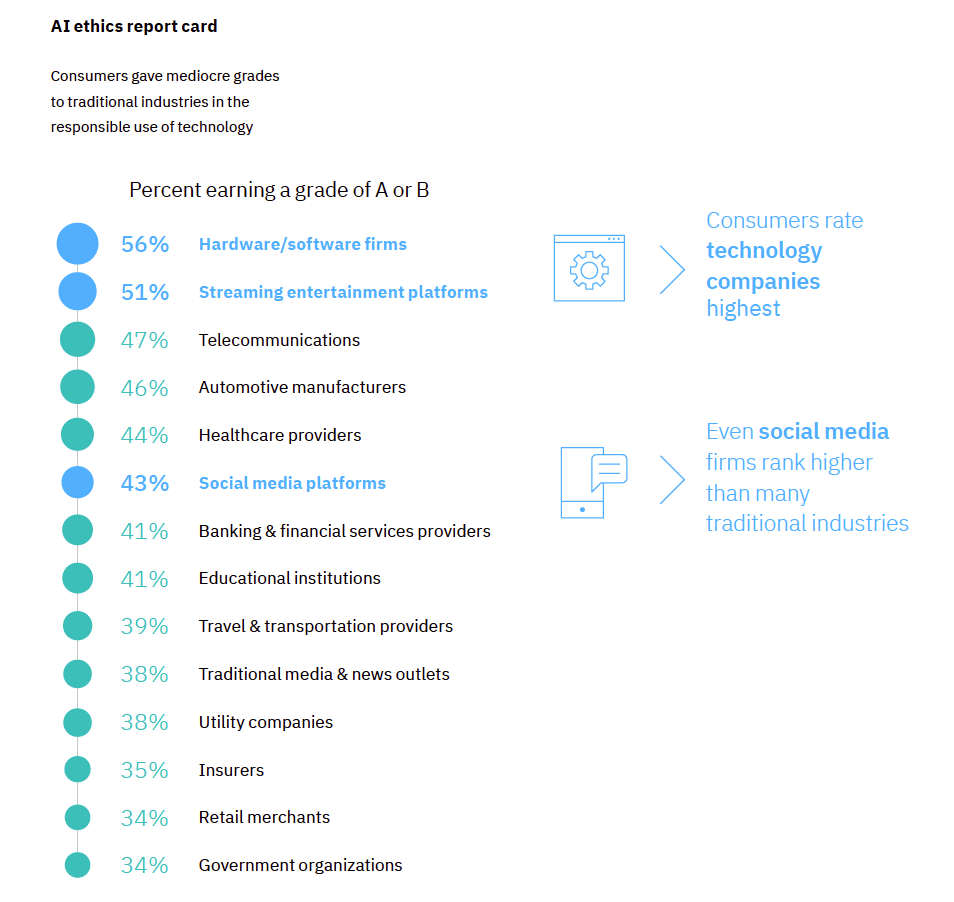

The firm’s global study also indicates that there is a strong imperative for advancing trustworthy AI, including better performance compared to peers in sustainability, social responsibility, and diversity and inclusion, yet a gap remains between leaders’ intentions and meaningful action.

The study found:

Business executives are now seen as the driving force in AI ethics

- CEOs (28 percent), along with Board members (10 percent), General Counsels (10 percent), Privacy Officers (8 percent), and Risk & Compliance Officers (6 percent), are viewed as being most accountable for AI ethics by those surveyed.

- While 66 percent of respondents cite the CEO or other C-level executives as having a strong influence on their organization’s ethics strategy, more than half also cite board directives (58 percent) and the shareholder community (53 percent).

Building trustworthy AI is perceived as a strategic differentiator, and organizations are beginning to implement AI ethics mechanisms

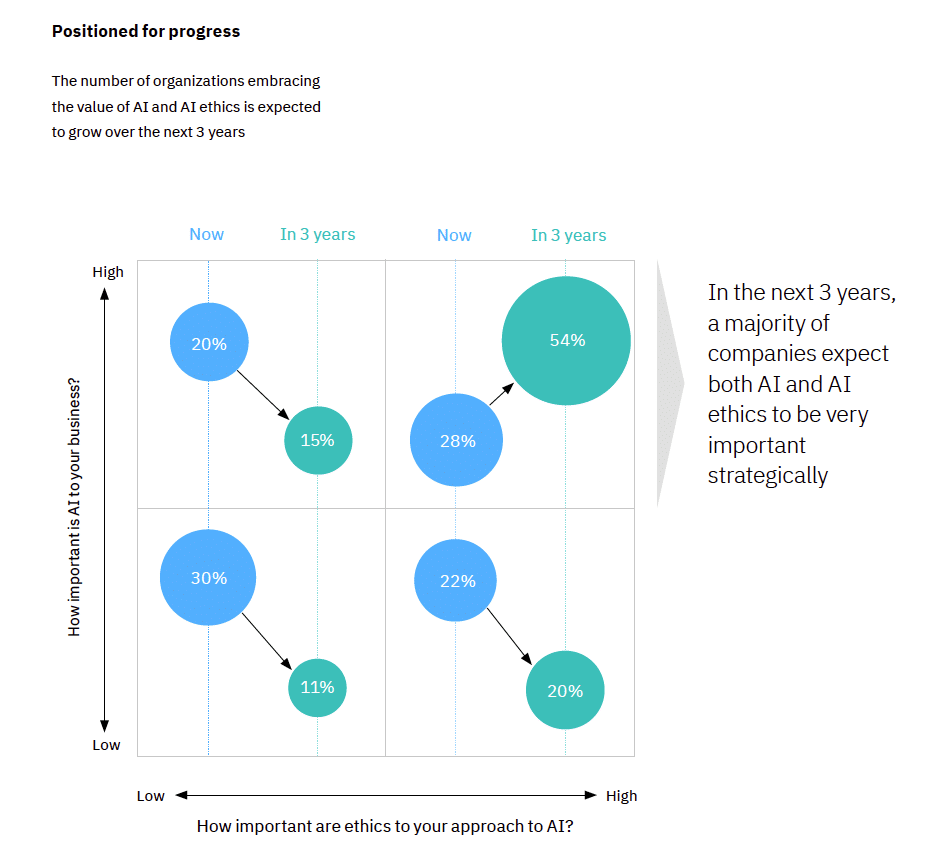

- More than three-quarters of business leaders surveyed this year agree AI ethics is important to their organizations, up from about 50 percent in 2018.

- At the same time, 75 percent of respondents believe ethics is a source of competitive differentiation, and more than 67 percent of respondents who view AI and AI ethics as important indicate their organizations outperform their peers in sustainability, social responsibility, and diversity and inclusion.

- Many companies have started making strides. In fact, more than half of respondents say their organizations have taken steps to embed AI ethics into their existing approach to business ethics.

- More than 45 percent of respondents say their organizations have created AI-specific ethics mechanisms, such as an AI project risk assessment framework and auditing/review process.

Ensuring ethical principles are embedded in AI solutions is an urgent need for organizations, but progress is still too slow

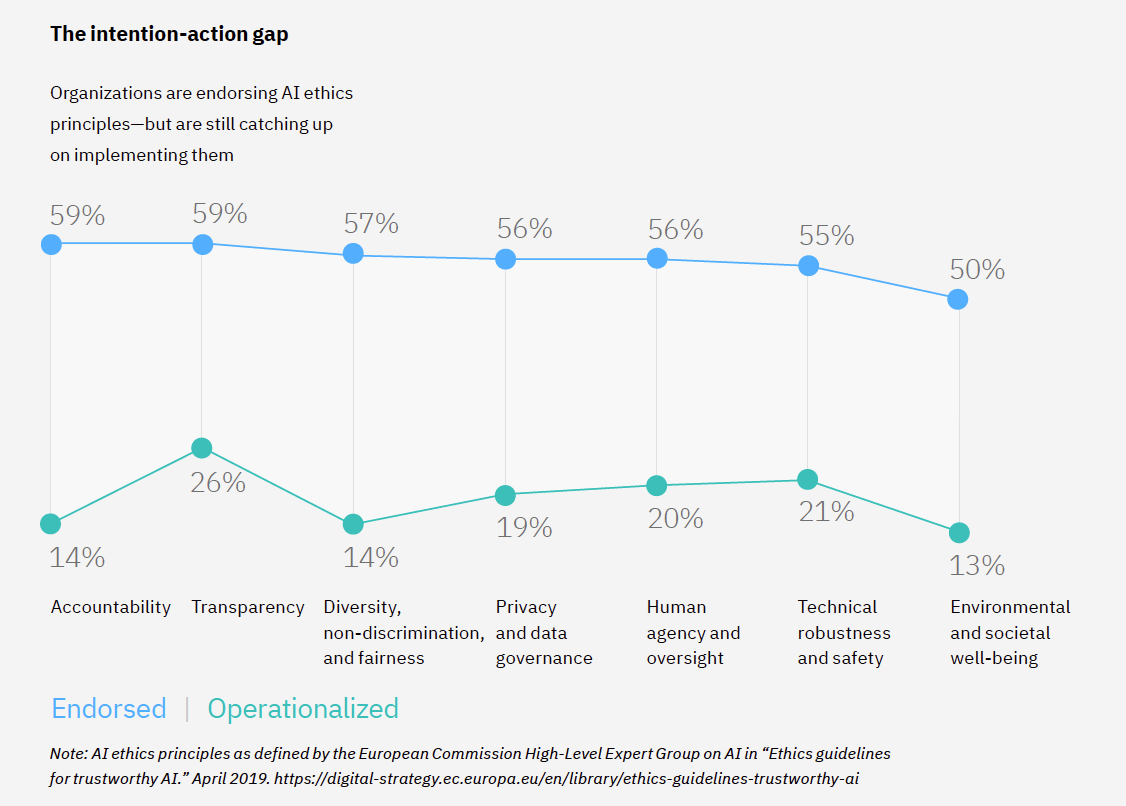

- More surveyed CEOs (79 percent) are now prepared to embed AI ethics into their AI practices, up from 20 percent in 2018, and more than half of responding organizations have publicly endorsed common principles of AI ethics.

- Yet fewer than a quarter of responding organizations have operationalized AI ethics, and fewer than 20 percent of respondents strongly agreed that their organization’s practices and actions match (or exceed) their stated principles and values.

- 68 percent of surveyed organizations acknowledge that having a diverse and inclusive workplace is important to mitigating bias in AI, but findings indicate that AI teams are still substantially less diverse than their organizations’ workforces: 5.5 times less inclusive of women, 4 times less inclusive of LGBT+ individuals and 1.7 times less racially inclusive.

“As many companies today use AI algorithms across their business, they potentially face increasing internal and external demands to design these algorithms to be fair, secured and trustworthy; yet, there has been little progress across the industry in embedding AI ethics into their practices,” said Jesus Mantas, global managing partner at IBM Consulting, in a news release. “Our IBV study findings demonstrate that building trustworthy AI is a business imperative and a societal expectation, not just a compliance issue. As such, companies can implement a governance model and embed ethical principles across the full AI life cycle.”

The time for companies to act is now

The study data suggest that organizations that implement a broad AI ethics strategy interwoven throughout their business units may have a competitive advantage moving forward. The study provides recommended actions for business leaders, including:

- Take a cross-functional, collaborative approach: Ethical AI requires a holistic approach, and a holistic set of skills across all stakeholders involved in the AI ethics process. C-Suite executives, designers, behavioral scientists, data scientists, and AI engineers each have a distinct role to play in the trustworthy AI journey.

- Establish both organizational and AI lifecycle governance to operationalize the discipline of AI ethics: Take a holistic approach to incentivizing, managing and governing AI solutions across the full AI lifecycle, from establishing the right culture to nurture AI responsibly, to practices and policies to products.

- Reach beyond your organization for partnership: Expand your approach by identifying and engaging key AI-focused technology partners, academics, startups, and other ecosystem partners to establish “ethical interoperability.”

The IBV study, “AI ethics in action: An enterprise guide to progressing trustworthy AI,” surveyed 1,200 executives in 22 countries across 22 industries to understand where executives stand on the importance of AI ethics and how organizations are operationalizing it. The study was conducted in cooperation with Oxford Economics in 2021.