New research from data analytics solutions firm Ocient uncovers key trends around how organizations are managing the shift from big-data volumes toward ingesting, storing and analyzing hyperscale data sets, which include trillions of data records, and the expected technical requirements and business results from that shift.

The firm’s new report, Beyond Big Data: The Rise of Hyperscale, is based on a survey conducted in May 2022 by Propeller Insights.

Extraordinary data growth

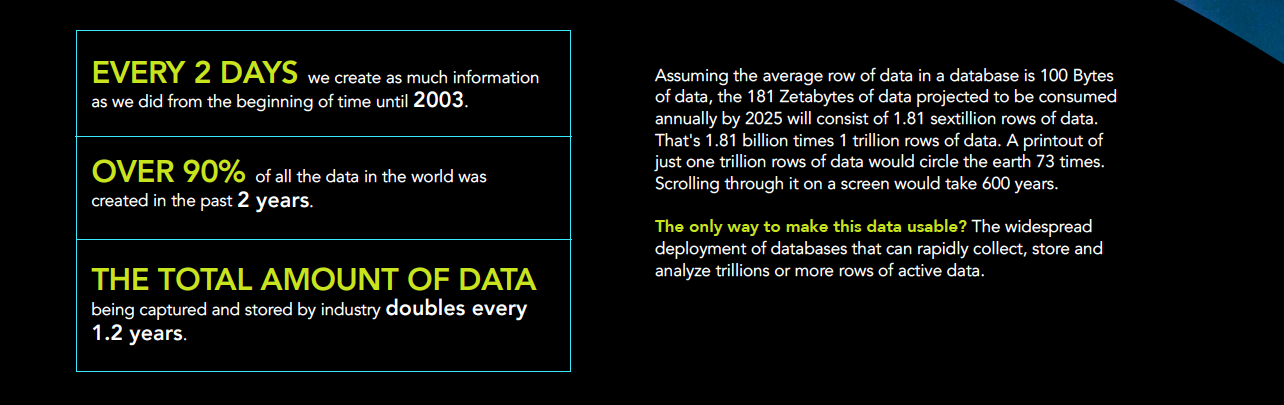

Data is growing at an extraordinary rate. “The Global DataSphere is expected to more than double in size from 2022 to 2026,” said John Rydning, research vice president of the IDC Global DataSphere, a measure of how much new data is created, captured, replicated, and consumed each year, in a news release. “The Enterprise DataSphere will grow more than twice as fast as the Consumer DataSphere over the next five years, putting even more pressure on enterprise organizations to manage and protect the world’s data while creating opportunities to activate data for business and societal benefits.”

IDC Global DataSphere research also documented that “in 2020, 64.2 zettabytes of data was created or replicated” and forecasted that “global data creation and replication will experience a compound annual growth rate (CAGR) of 23 percent over the 2020-2025 forecast period.” At that rate, annual data creation would exceed 180 zettabytes (180 billion terabytes) in 2025.
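The 2025 figure follows from compounding the 2020 volume at the quoted growth rate for five years. A minimal sketch of that arithmetic, using only the two IDC figures cited above; the function and constant names are illustrative, and the terabyte conversion assumes decimal (SI) units:

```python
# Figures quoted from the IDC Global DataSphere research cited in the article.
BASE_YEAR = 2020
BASE_ZETTABYTES = 64.2  # data created or replicated in 2020
CAGR = 0.23             # compound annual growth rate over 2020-2025

# Assumption: decimal (SI) units, so 1 ZB = 10**21 bytes and 1 TB = 10**12 bytes.
TERABYTES_PER_ZETTABYTE = 10**9


def project_volume(year: int) -> float:
    """Return the projected annual data volume, in zettabytes, for `year`."""
    years_elapsed = year - BASE_YEAR
    return BASE_ZETTABYTES * (1 + CAGR) ** years_elapsed


if __name__ == "__main__":
    for year in range(BASE_YEAR, 2026):
        zettabytes = project_volume(year)
        terabytes = zettabytes * TERABYTES_PER_ZETTABYTE
        print(f"{year}: {zettabytes:6.1f} ZB = {terabytes:.2e} TB")
```

Five years of 23 percent growth multiplies the 2020 volume by roughly 2.8, which is where the figure of just over 180 zettabytes comes from.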

The survey responses are consistent with forecasts of such exponential data growth. When asked how fast the volume of data managed by their organization will grow over the next one to five years, 97 percent of respondents answered “fast” or “very fast,” and 72 percent of C-level executives expect the volume to grow “very fast” over the next five years.

Barriers to supporting data growth and hyperscale analytics

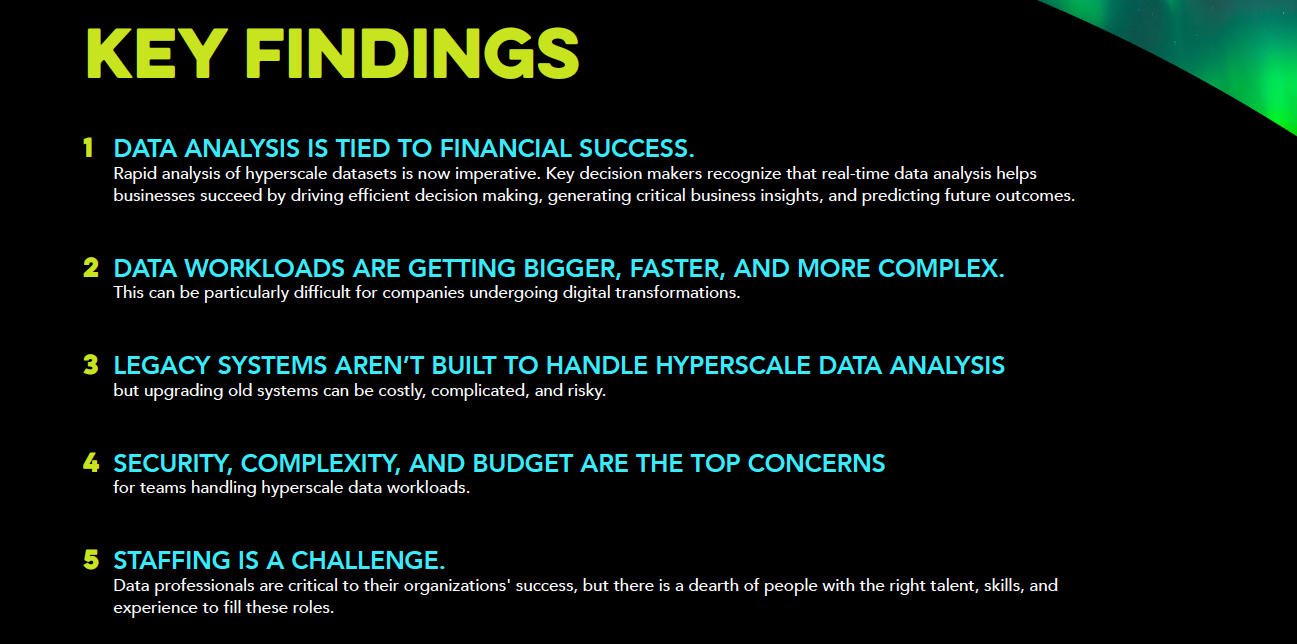

To support such tremendous data growth, 98 percent of respondents agreed it’s somewhat or very important to increase the amount of data analyzed by their organizations in the next one to three years. However, respondents are experiencing barriers to harnessing the full capacity of their data and cited these top three limiting factors:

- The volume of data is growing too fast (62 percent total, 65 percent C-level)

- There is a lack of talent to analyze the data (49 percent total, 47 percent C-level)

- Current solutions are not flexible enough (49 percent total, 34.8 percent C-level)

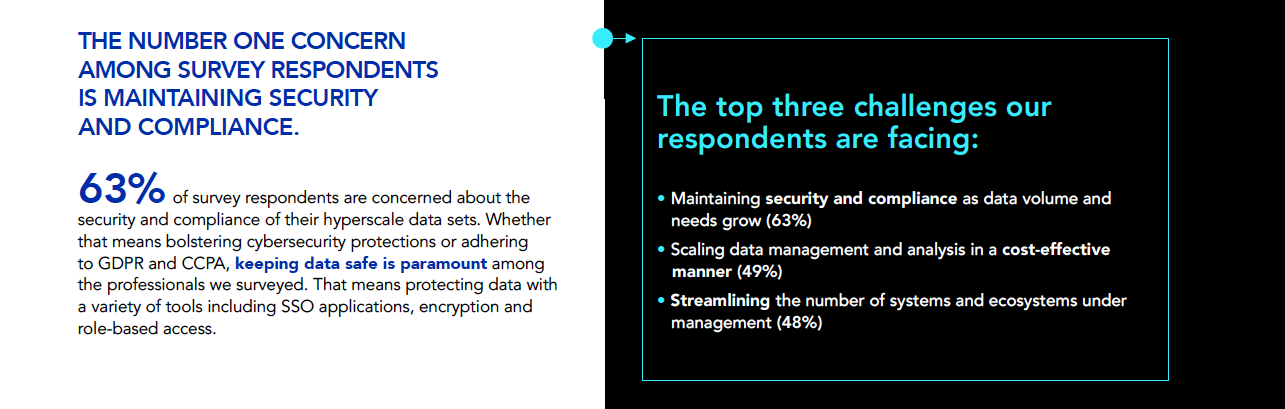

When asked about their biggest data analysis pain points today, security and risk ranked first among C-level respondents (68 percent), with metadata and governance (41 percent) and slow data ingestion (31 percent) also among the top concerns. When scaling data management and analysis within their organization, 63 percent said that maintaining security and compliance as data volume and needs grow is a challenge they currently face.

Survey respondents also indicated that legacy systems are another source of pain and a barrier to supporting data growth and hyperscale analytics. When asked if they plan to switch data warehousing solutions, more than 59 percent of respondents answered “yes,” and 46 percent of respondents cited a legacy system as their motivation for switching. When ranking their most important considerations in choosing a new data warehouse technology, respondents ranked “modernizing our IT infrastructure” number one.

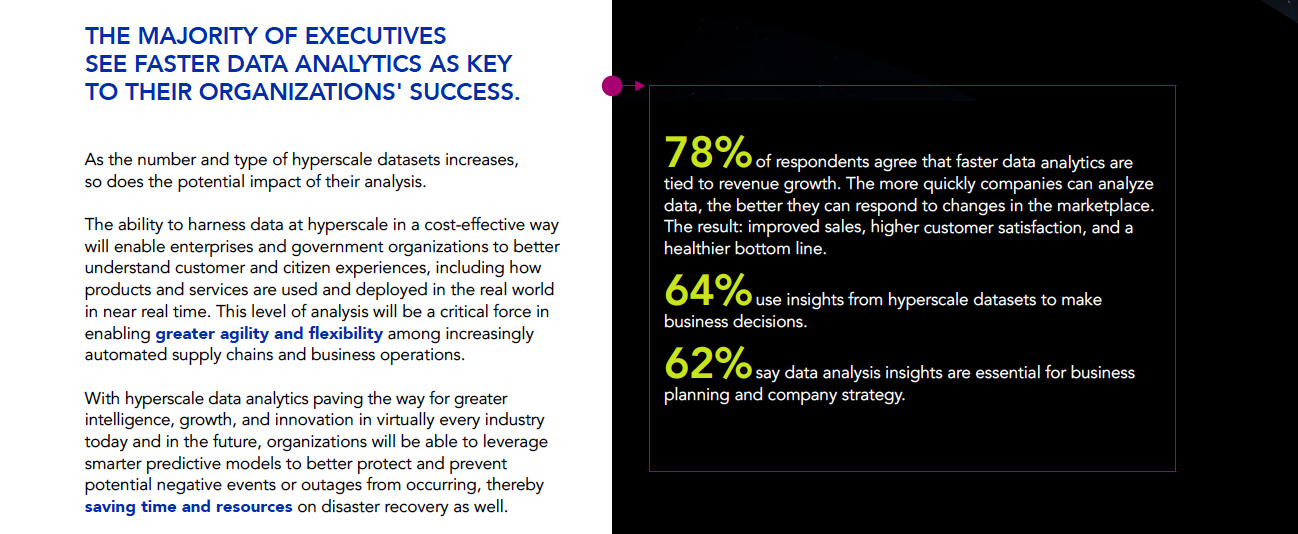

Faster data analytics improve decisions, revenue and success

The survey respondents believe hyperscale data analytics is crucial to their success. Sixty-four percent of respondents indicate hyperscale data analytics provides important insights used to make better business decisions, and 62 percent said it is essential for planning and strategy.

The survey respondents also see a strong relationship between implementing faster data analytics and growing the company’s bottom line. When asked about this relationship, 78 percent of respondents agreed there is a definite relationship. Among C-level respondents, more than 85 percent agreed.

“Data analysis is no longer a ‘nice-to-have’ for organizations. Hyperscale data intelligence has become a mission-critical component for modern enterprises and government agencies looking to drive more impact and grow their bottom line. With the rapid pace of growth, it’s imperative for enterprises and government agencies to enhance their ability to ingest, store, and analyze fast-growing data sets in a way that is secure and cost effective,” said Chris Gladwin, co-founder and CEO at Ocient, in the release. “The ability to migrate from legacy systems and buy or build new data analysis capabilities for rapidly growing workloads will enable enterprises and government organizations to drive new levels of agility and growth that were previously only imaginable.”


The report is based on a survey of 500 data and technology leaders who manage active data workloads of 150 terabytes or more. Respondents include partners, owners, presidents, C-level executives, vice presidents and directors in many industries, including technology, manufacturing, financial services, retail, and government. Their organizations’ annual revenue ranges from $50 million to more than $5 billion. Approximately 50 percent of respondents represent companies with annual revenue greater than $500 million.