Although 100 percent of senior executives surveyed agree there are risks associated with using artificial intelligence, just 4 percent consider these risks to be “significant,” according to new research from global law firm Baker McKenzie. Companies in the US may be bullish on using AI, but many executives are ambivalent about its associated risks, especially when it comes to AI-enabled hiring and people management tools.

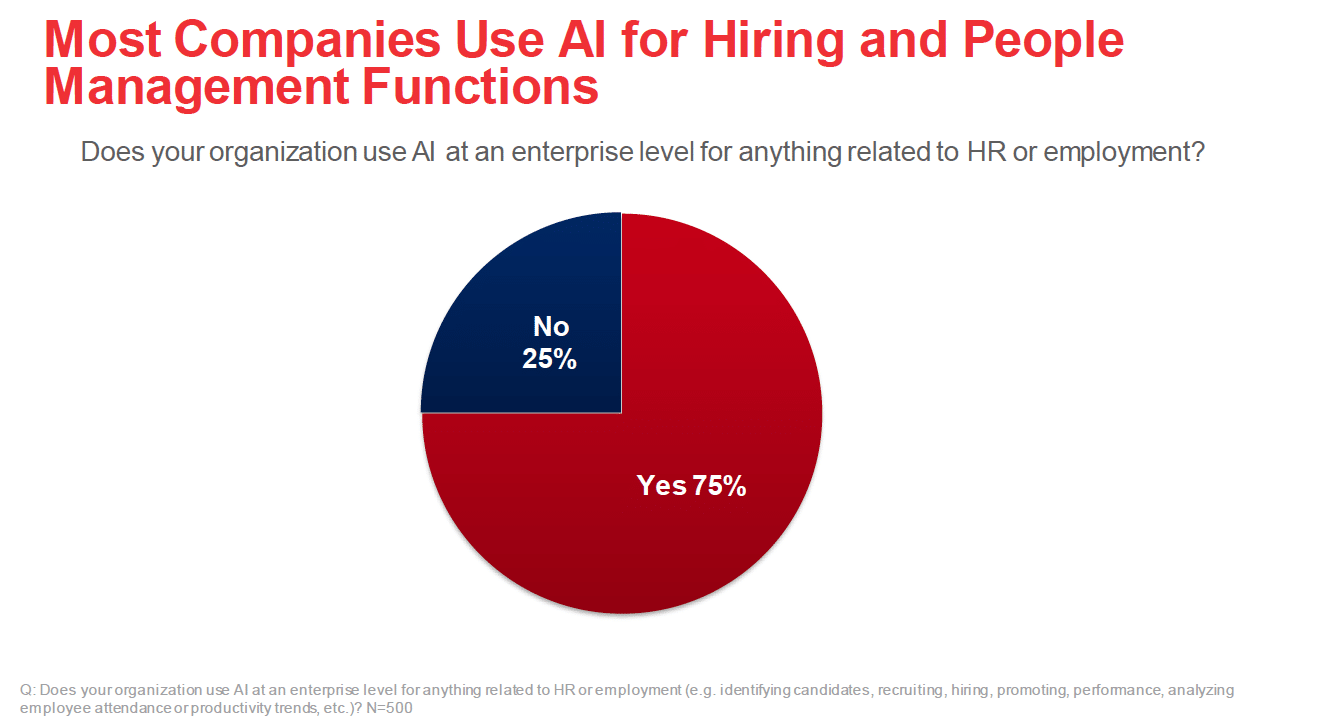

Three-fourths of those surveyed indicate their organization uses AI for key human resources (HR) management and employment functions, such as recruiting and hiring, performance and promotion, and analyzing employee attendance or productivity trends.

The firm’s new report, Risky Business: Identifying Blind Spots in Corporate Oversight of Artificial Intelligence, queried 500 US-based C-level executives who self-identified as part of the decision-making team responsible for their organization’s adoption, use and management of AI-enabled tools.

“There is an extraordinary amount of potential downside risk in using AI algorithms for people management functions, and a clear disconnect in developing and managing HR-specific, AI-enabled applications,” said Bradford Newman, a Palo Alto-based litigation partner at Baker McKenzie who leads the firm’s North America machine learning and AI practice and chairs the American Bar Association Business and Commercial Litigation AI Subcommittee, in a news release. “Given the increase in state legislation and regulatory enforcement, companies need to step up their game when it comes to AI oversight and governance to ensure their AI is ethical and protect themselves from liability by managing their exposure to risk accordingly.”

HR, hiring and potential algorithm bias

Despite HR’s heavy reliance on AI-enabled tools, HR executives are not the key decision-makers for the management of these technologies. Instead, information technology and information security departments take the lead on these decisions. More than half (54 percent) of survey respondents said their organization involves HR, and far fewer indicated that operations or legal are consulted (42 percent and 27 percent, respectively).

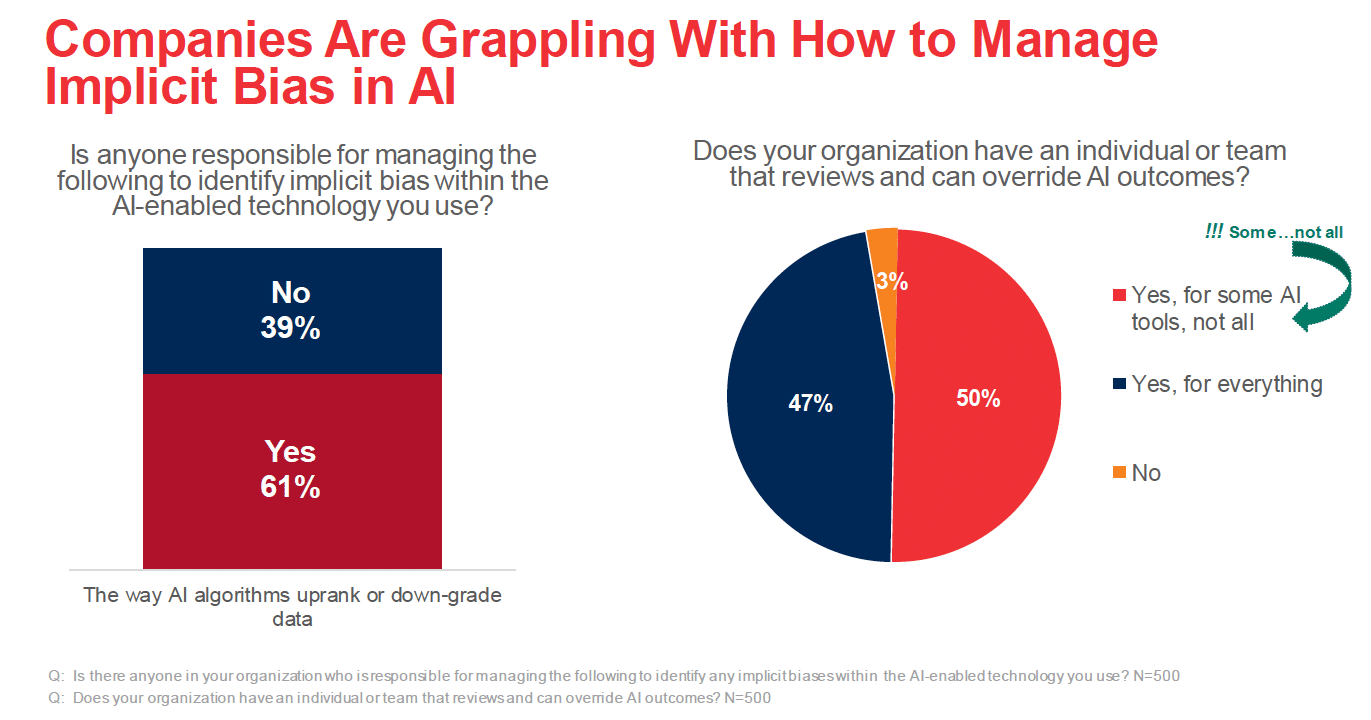

When it comes to managing implicit bias, 40 percent of respondents said they have no oversight into the way AI algorithms uprank or downgrade data. Though companies have the capability to override some AI-enabled outcomes, fewer than half (47 percent) can do so with all their AI tools.

“It’s not surprising that chief technologists play a significant role in a company’s AI oversight, what is concerning is the lack of involvement by other key stakeholders—especially the legal and human resource functions,” said George Avraam, chair of Baker McKenzie’s North America Employment & Compensation Practice, in the release. “Senior HR executives have a deep understanding of how bias can adversely impact a workforce and together with their colleagues in legal, should have a bigger role in the development and oversight of the AI tools their companies use.”

The technology department’s lead role in AI oversight is not limited to HR tools. According to the survey, the technology department is also most frequently tapped to oversee the enterprise-wide deployment of AI tools, while the legal department is the least likely to be involved.

AI corporate governance and oversight is needed

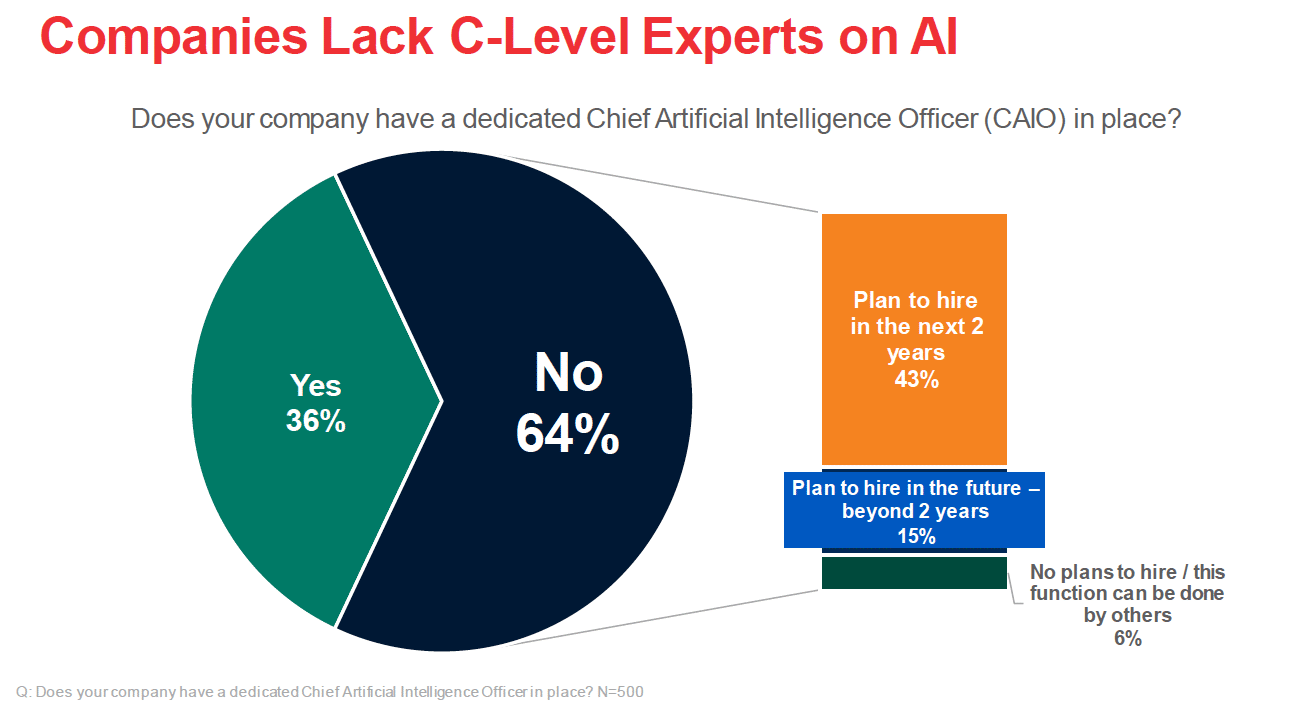

Despite the significance of AI to large enterprises, only 36 percent currently have a Chief Artificial Intelligence Officer (CAIO) in place. The 64 percent currently lacking CAIOs are in no rush to hire or appoint one: 58 percent say they will likely hire one at some point, while 6 percent do not believe they need one.

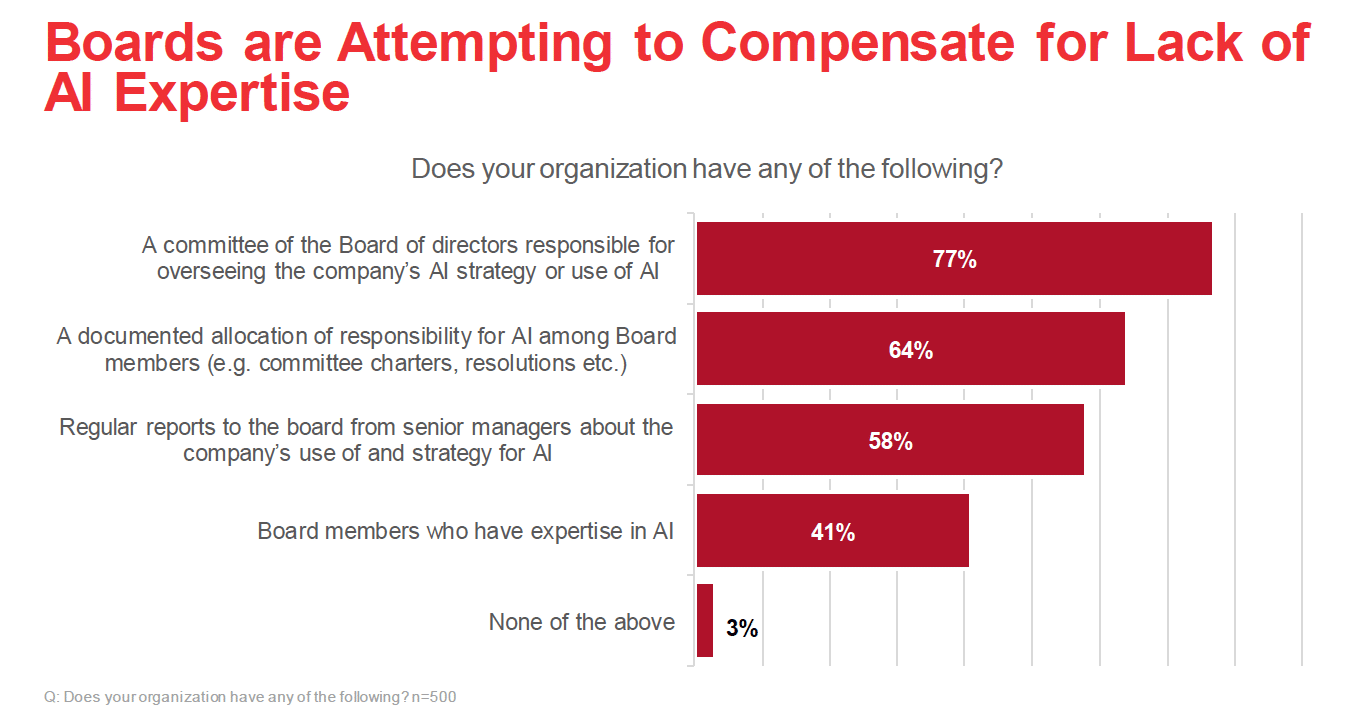

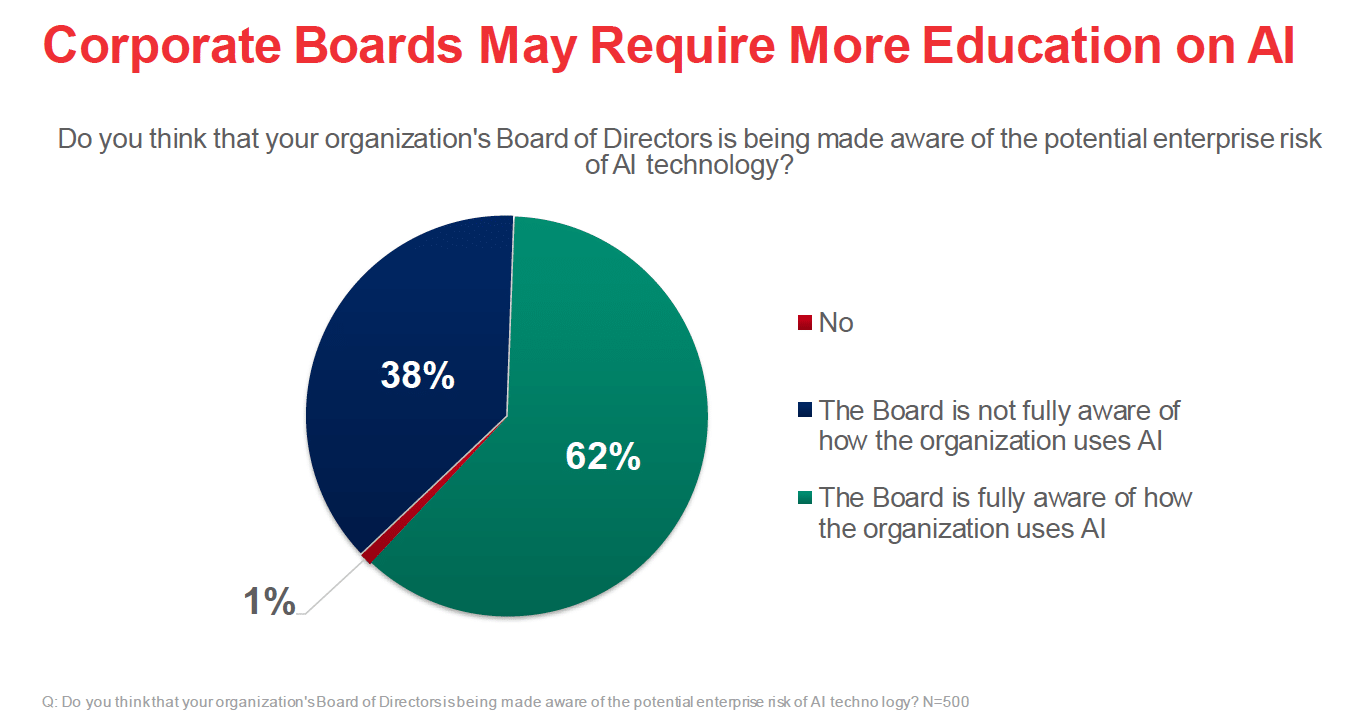

Appointing a CAIO may be overdue: just 41 percent of respondents said they have AI expertise at the Board level. Even so, 77 percent of respondents say their organization’s Board of Directors currently has a committee responsible for overseeing the use of AI across the enterprise.

“Today’s companies have a lot to learn about the risks associated with using AI, but we know that CAIOs will play a critical role, regardless of industry, in the years to come,” said Pamela Church, chair of Baker McKenzie’s North America Intellectual Property & Technology Practice, in the release. “The CAIO’s oversight should span technology and functional departments, ensuring that the organization has a holistic view of the AI risks inherent to the technology they are using.”

Artificial confidence: Past performance is no guarantee of future results

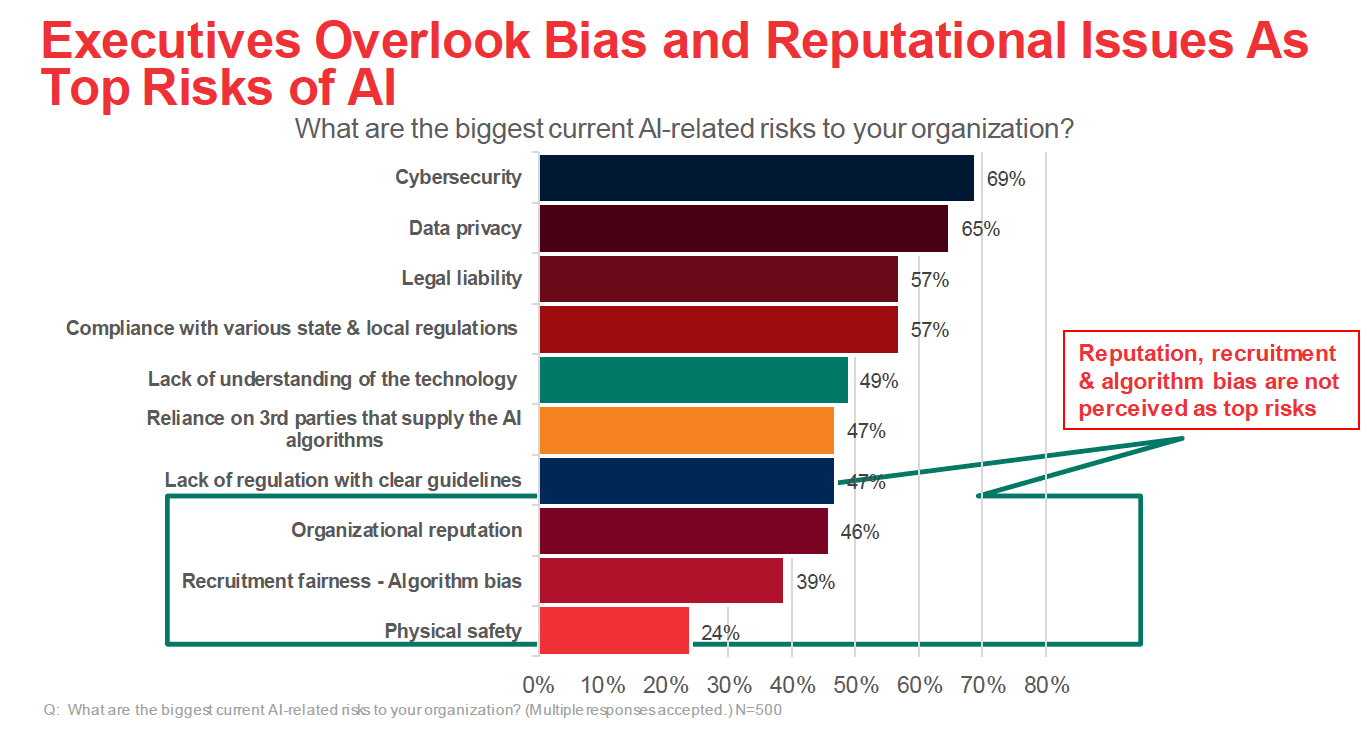

Executives surveyed believe that the top organizational risks of using AI-enabled tools are cybersecurity and data privacy, but they consider risks around corporate reputation and algorithm bias to be significantly less concerning.

Additionally, 24 percent of executives surveyed report that corporate policies for managing AI risks are undocumented—or they do not exist at all. And 24 percent of those with documented policies deem them only “somewhat effective.”

The lack of negative consequences to date may be partially responsible for the current blind spots on AI. Nearly 4 in 10 respondents (38 percent) believe their company’s Board of Directors is not fully aware of how AI is being used across the organization. Eighty-three percent say their organization has not been subject to any AI-related litigation, inquiry or enforcement action.

“AI impacts our most important life functions—things like obtaining a loan, getting hired or being promoted. Make no mistake, enforcement and civil litigation related to corporate AI usage are coming,” said Newman. “Now is the time for companies to take a more holistic approach to AI oversight to ensure it is fair, transparent and unbiased. Those who wait may find out too late that expensive missteps could have been avoided with proper guardrails and guidance in place.”


The telephone- and email-based survey was conducted in December 2021 and January 2022 with executives at companies across a range of industries, with average annual revenues of at least $10.3 billion.