Powerful technologies like AI bring powerful threats, especially given the haphazard manner in which many companies rushed to deployment without guidelines or even real knowledge of the risks they were taking. New research from HiddenLayer, a provider of security for AI models and assets, highlights the pervasive use of AI and the risks involved in its deployment.

The firm’s inaugural AI Threat Landscape report reveals that virtually all surveyed companies (98 percent) consider at least some of their AI models crucial to their business success, and 77 percent identified breaches to their AI in the past year. Yet only 14 percent of IT leaders said their companies are planning and testing for adversarial attacks on AI models, a gap that points to an all-too-common casual, and potentially dangerous, attitude toward AI security.

The research shows how widely today’s businesses use AI: companies have, on average, a staggering 1,689 AI models in production. In response, security for AI has become a priority, with 94 percent of IT leaders allocating budgets to secure their AI in 2024. Yet only 61 percent are highly confident in their allocation, and 92 percent are still developing a comprehensive plan for this emerging threat. These findings reveal the need for support in implementing security for AI.

“AI is the most vulnerable technology ever to be deployed in production systems,” said Chris “Tito” Sestito, co-founder and CEO of HiddenLayer, in a news release. “The rapid emergence of AI has resulted in an unprecedented technological revolution, of which every organization in the world is affected. Our first-ever AI Threat Landscape report reveals the breadth of risks to the world’s most important technology. HiddenLayer is proud to be on the front lines of research and guidance around these threats to help organizations navigate the security for AI landscape.”

Risks involved with AI use

Adversaries can use a variety of methods to turn AI to their advantage. The most common risks of AI usage include:

- Manipulation to give biased, inaccurate, or harmful information.

- Creation of harmful content, such as malware, phishing, and propaganda.

- Development of deepfake images, audio, and video.

- Use by malicious actors to gain access to dangerous or illegal information.

Common types of attacks on AI

There are three major types of attacks on AI:

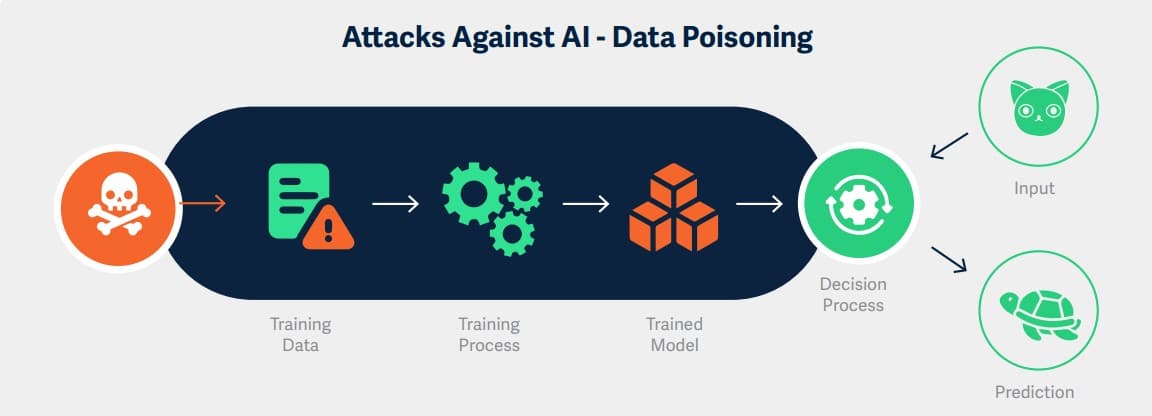

- Adversarial machine learning attacks: These target AI algorithms with the aim of altering the AI’s behavior, evading AI-based detection, or stealing the underlying technology.

- Generative AI system attacks: These target an AI system’s filters and restrictions in order to generate content deemed harmful or illegal.

- Supply chain attacks: These target ML artifacts and platforms with the goal of achieving arbitrary code execution and delivering traditional malware.
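To make the supply chain category more concrete, here is a minimal sketch of one way a serialized ML artifact could be screened before loading. It is an illustration only, not a method described in the report: it assumes the artifact is a Python pickle, and the `SUSPICIOUS_OPCODES` set, the `find_suspicious_opcodes` helper, and the `Malicious` class are hypothetical names introduced here. The scan reads opcodes with the standard library's `pickletools.genops` and never unpickles the data.

```python
import pickle
import pickletools

# Assumption: opcodes that can import or call arbitrary objects during unpickling.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def find_suspicious_opcodes(data: bytes) -> list[str]:
    """Return the names of risky opcodes found, without unpickling the data."""
    found = []
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.append(opcode.name)
    return found


class Malicious:
    """Hypothetical stand-in for a tampered artifact."""

    def __reduce__(self):
        # A harmless placeholder for an attacker's payload; it would only
        # run if the bytes were passed to pickle.loads, which we never do.
        return (print, ("payload executed",))


benign = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
hostile = pickle.dumps(Malicious())

print("benign :", find_suspicious_opcodes(benign))
print("hostile:", find_suspicious_opcodes(hostile))
```

A check like this is only a coarse heuristic. Many legitimate model files also use these opcodes, so in practice it would flag artifacts for review rather than prove that one is malicious.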

Challenges to securing AI

While industries are reaping the benefits of increased efficiency and innovation thanks to AI, many organizations do not have proper security measures in place to ensure safe use. Some of the biggest challenges reported by organizations in securing their AI include:

- Shadow IT: 61 percent of IT leaders acknowledge shadow AI (solutions that are not officially known to, or under the control of, the IT department) as a problem within their organizations.

- Third-party AIs: 89 percent express concern about security vulnerabilities associated with integrating third-party AIs, and 75 percent believe third-party AI integrations pose a greater risk than existing threats.

Best practices for securing AI

The researchers outlined recommendations for organizations to begin securing their AI, including:

- Discovery and asset management: Begin by identifying where AI is already used in your organization. What applications has your organization already purchased that use AI or have AI-enabled features?

- Risk assessment and threat modeling: Perform threat modeling to understand the potential vulnerabilities and attack vectors that malicious actors could exploit. This completes your understanding of your organization’s AI risk exposure.

- Data security and privacy: Go beyond the typical implementation of encryption, access controls, and secure data storage practices to protect your AI model data. Evaluate and implement security solutions that are purpose-built to provide runtime protection for AI models.

- Model robustness and validation: Regularly assess the robustness of AI models against adversarial attacks. This involves pen-testing the model’s response to various attacks, such as intentionally manipulated inputs.

- Secure development practices: Incorporate security into your AI development lifecycle. Train your data scientists, data engineers, and developers on the various attack vectors associated with AI.

- Continuous monitoring and incident response: Implement continuous monitoring mechanisms to detect anomalies and potential security incidents in real time for your AI, and develop a robust AI incident response plan to quickly and effectively address security breaches or anomalies.
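As a rough illustration of the model robustness recommendation above, the sketch below compares a model's accuracy on clean inputs with its accuracy on intentionally manipulated inputs. Everything in it is an assumption made for the example: the toy linear classifier, the sample data, the `epsilon` perturbation budget, and the function names are hypothetical and do not come from the report. Because the model is linear, the worst-case perturbation within the budget simply moves each feature against the sign of its weight.

```python
def predict(weights, bias, x):
    """Toy linear classifier: returns 1 if the score is positive, else 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def sign(value):
    return 1 if value > 0 else -1 if value < 0 else 0


def worst_case_perturbation(weights, x, label, epsilon):
    """Shift each feature by epsilon in the direction that most hurts the true label."""
    direction = -1 if label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


def robust_accuracy(weights, bias, samples, epsilon):
    """Fraction of samples still classified correctly after perturbation."""
    correct = 0
    for x, label in samples:
        x_adv = worst_case_perturbation(weights, x, label, epsilon)
        if predict(weights, bias, x_adv) == label:
            correct += 1
    return correct / len(samples)


# Hypothetical model and labelled samples, chosen only for illustration.
WEIGHTS = [1.0, -1.0]
BIAS = 0.0
SAMPLES = [
    ([2.0, 0.0], 1),
    ([0.3, 0.0], 1),
    ([0.0, 2.0], 0),
    ([0.0, 0.2], 0),
]

clean = robust_accuracy(WEIGHTS, BIAS, SAMPLES, epsilon=0.0)
attacked = robust_accuracy(WEIGHTS, BIAS, SAMPLES, epsilon=0.25)
print(f"clean accuracy: {clean:.2f}, accuracy under perturbation: {attacked:.2f}")
```

In this toy setup the two samples that sit close to the decision boundary flip under perturbation, which is the kind of gap a regular robustness assessment is meant to surface. Real models would need an attack suited to their architecture and data.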

Download the full report here.

The report surveyed 150 IT security and data science leaders to shed light on the biggest vulnerabilities impacting AI today, their implications for commercial and federal organizations, and cutting-edge advancements in security controls for AI in all its forms.