Even before AI gobbled up business leaders’ priority lists, data security and privacy were pressing problems for many brands and companies. The wunderkind new tech has only made privacy shortfalls more dangerous, and consumers are paying attention.

A new national poll from data trust firm Ketch and privacy protection think tank The Ethical Tech Project examines awareness and usage of AI among consumers, their expectations about data privacy, and the business value of featuring ethical data use as a consumer choice. Results of the research affirm that the market is ready for AI use cases, given that 68 percent of US adults are concerned but excited to see new and creative AI technology emerge. However, they want it to be done right.

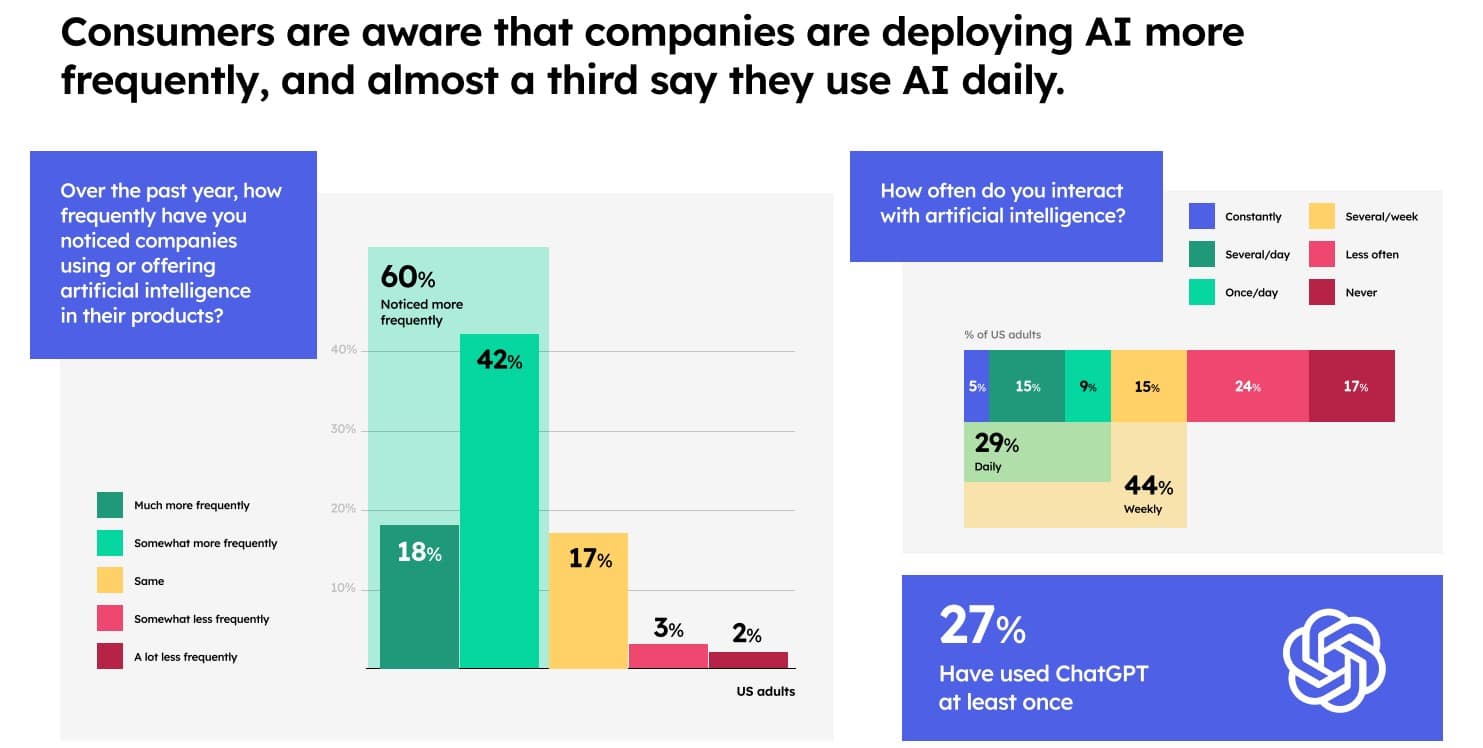

The impetus for this study, based on a survey conducted by Slingshot Strategies, was to truly understand how people value data privacy in the context of AI, an industry that is set to expand by $1.3T over the next 10 years. In March 2023, only 14 percent of US adults had tried ChatGPT. The new study found that 27 percent of respondents had used ChatGPT at least once, nearly doubling usage among adults in about six months.

Almost a third of US adults see the benefits and harms of the technology as evenly split

Consumers support many AI use cases that are already adopted in the market, such as translating languages or fraud detection, but consumers start to get concerned when AI takes on more responsibility for newer use cases, such as self-driving cars or replacing certain jobs.

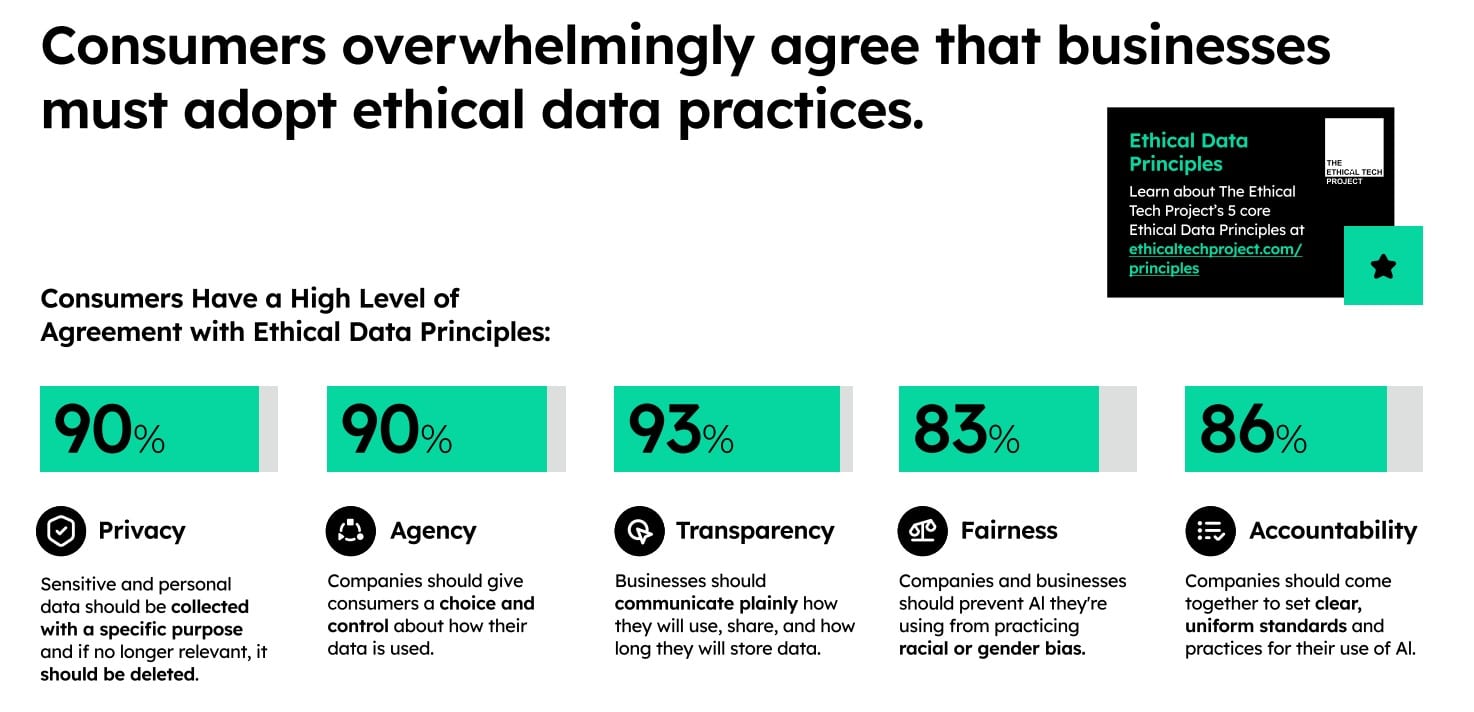

Based on these findings, it makes sense that consumers were also found to overwhelmingly agree that businesses must adopt ethical data practices in the age of AI. First, consumers see choice and control over data as a fundamental right, not a bonus feature. Second, consumers recognize AI’s hidden reach and demand clear, upfront communication. Lastly, acknowledging AI’s inherent potential for bias, consumers are advocating for a focus on corporate responsibility.

“Business leaders need to prioritize the ethical use of data as a competitive priority, particularly as AI changes their day-to-day operations,” said Tom Chavez, founder and chair of the Ethical Tech Project, in a news release. “Instead of leaders prognosticating whether consumers care about these issues or not, the genie is out of the bottle: data privacy in the era of AI is no longer a nice-to-have, it’s a need-to-have.”

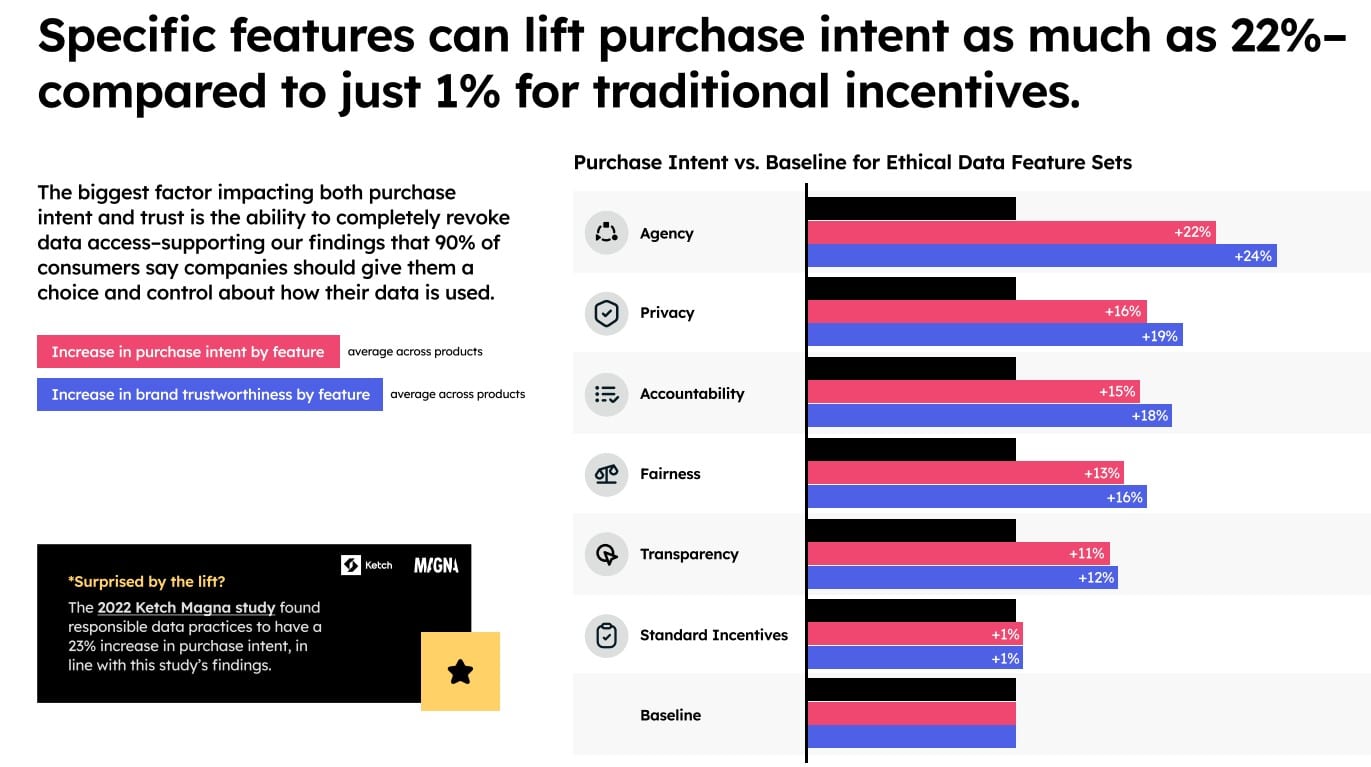

Research analysis focuses on purchase-intent metrics

To elicit preferences from consumers who were on the fence about businesses adopting ethical data practices, a conjoint analysis was performed—a way to quantify the impact of responsible data practices on purchase intent and brand preference. The presence of the best-testing data-privacy measure—the ability for consumers to revoke data access at any time—lifted purchase intent by 22 percent above baseline and lifted brand trustworthiness by 23 percent.

Short-term privacy features such as appropriate data retention periods, accountability measures like regulatory oversight, agency measures like control of data and data destruction, fairness features like optionality of whether data can be used to train AI, and transparency around data usage also produced double-digit improvements in purchase intent and brand trustworthiness above baseline.

“Data stewardship is directly linked to revenue and top-line growth—especially as AI heightens consumer anxiety around how AI models use their data from a variety of places,” said Jonathan Joseph, head of solutions and marketing at Ketch, in the release. “If this doesn’t motivate business leaders to take data privacy seriously—then I’m not sure what will.”

Slingshot Strategies conducted the survey of 2,500 US adults drawn from Dynata’s national consumer panel. The margin of error is ±1.96 percent. The survey was conducted September 7-11, 2023. Slingshot conducted a choice-based conjoint analysis to measure the increase in preference for consumer product options reflecting stark differences in data privacy, as well as a MaxDiff analysis in which respondents ranked various data privacy enhancements.
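
For readers curious where a figure like ±1.96 percent comes from, the sketch below shows one common way to compute a survey margin of error. It assumes simple random sampling, a 95 percent confidence level (z = 1.96), and the worst-case proportion of 0.5. These are textbook assumptions, not details confirmed by Slingshot Strategies, and the function name `margin_of_error` is purely illustrative.

```python
import math

def margin_of_error(sample_size, proportion=0.5, z_score=1.96):
    """Approximate margin of error for a proportion from a simple random sample.

    Assumptions (not stated in the survey methodology):
    - proportion=0.5 is the worst case, giving the widest margin
    - z_score=1.96 corresponds to a 95 percent confidence level
    """
    standard_error = math.sqrt(proportion * (1 - proportion) / sample_size)
    return z_score * standard_error

# 2,500 respondents, as reported for this survey
moe = margin_of_error(2500)
print(f"Margin of error: +/-{moe * 100:.2f} percentage points")
```

Under those assumptions, a sample of 2,500 yields a margin of about 1.96 percentage points, which matches the reported figure. Panel-based surveys often apply weighting that can widen the effective margin, so this should be read as an approximation.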