The Cambridge Analytica scandal has put the data crisis squarely in the public spotlight, but the crisis of trust facing digital platforms such as Facebook, Google and Twitter was a decade or more in the making. According to new research from the Digital Citizens Alliance, it was caused by the companies’ unwillingness to take responsibility for the content that appears on their sites, their habit of putting profits over trust and a legalistic approach to avoiding accountability.

The organization’s report, Digital Platforms in Crisis: Ten Years in the Making, comes out as Facebook struggles to contain the crisis surrounding its role in the misuse of users’ personal information, amid rising outrage over inappropriate content on digital platforms and a continued struggle to curb divisive and misleading political information.

Digital Citizens called on the platforms to acknowledge that the aggressively hands-off approach they took to policing content made it easy for criminals and other bad actors to operate. The group also called on the platforms to hire more monitors, create a system to identify and share information about bad actors, de-list or demote websites offering illicit goods and services, and collaborate to create uniform basic privacy settings that users can easily understand.

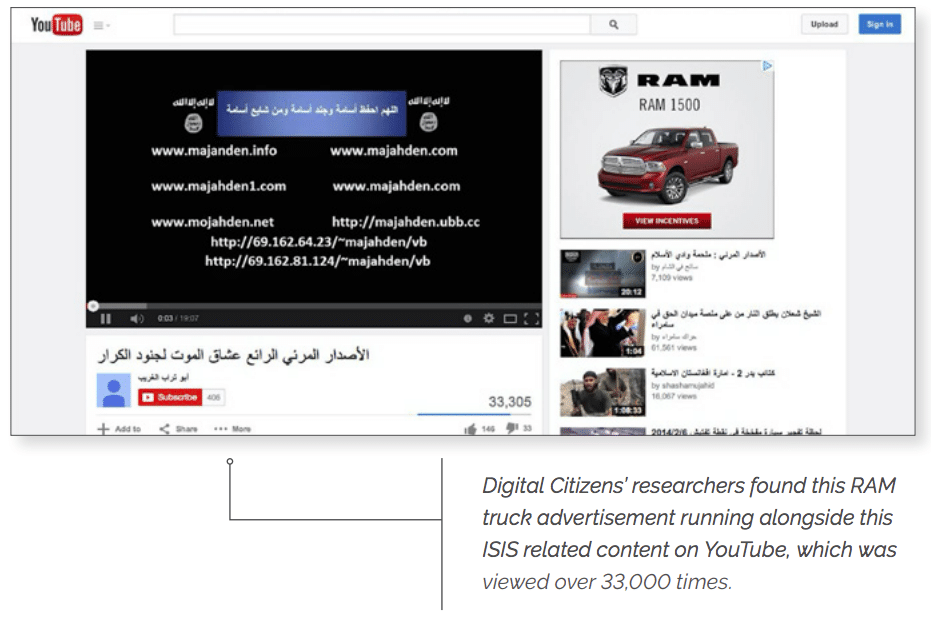

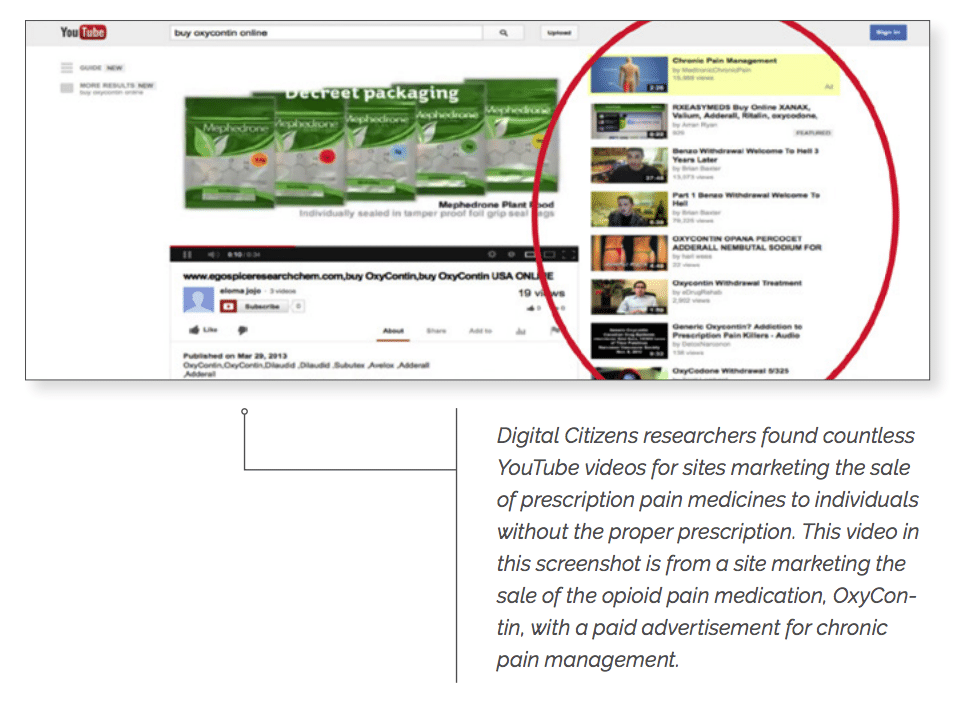

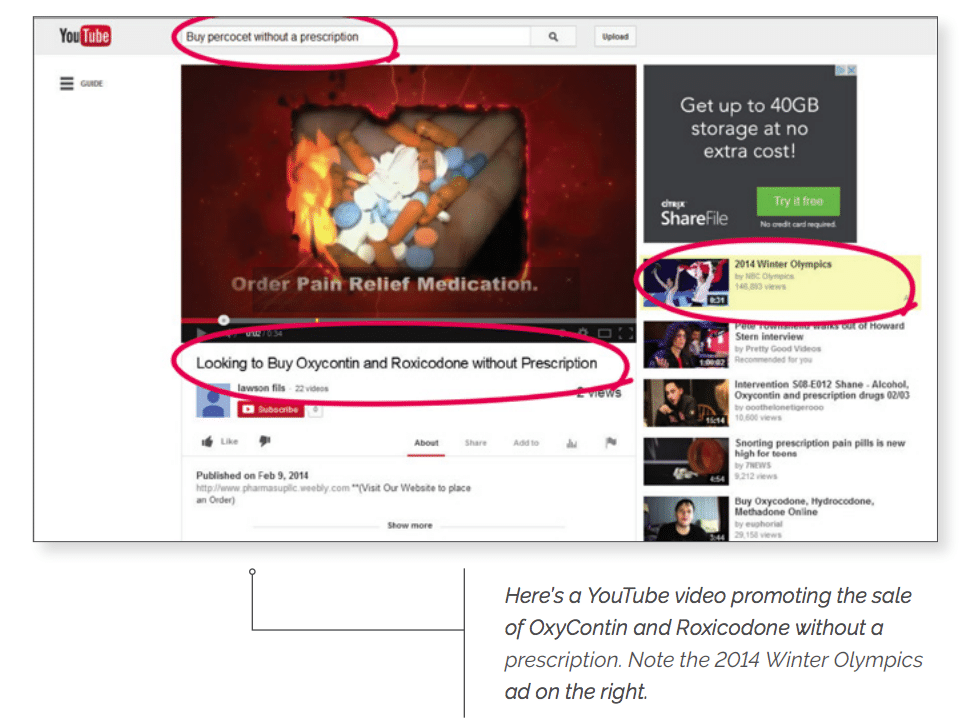

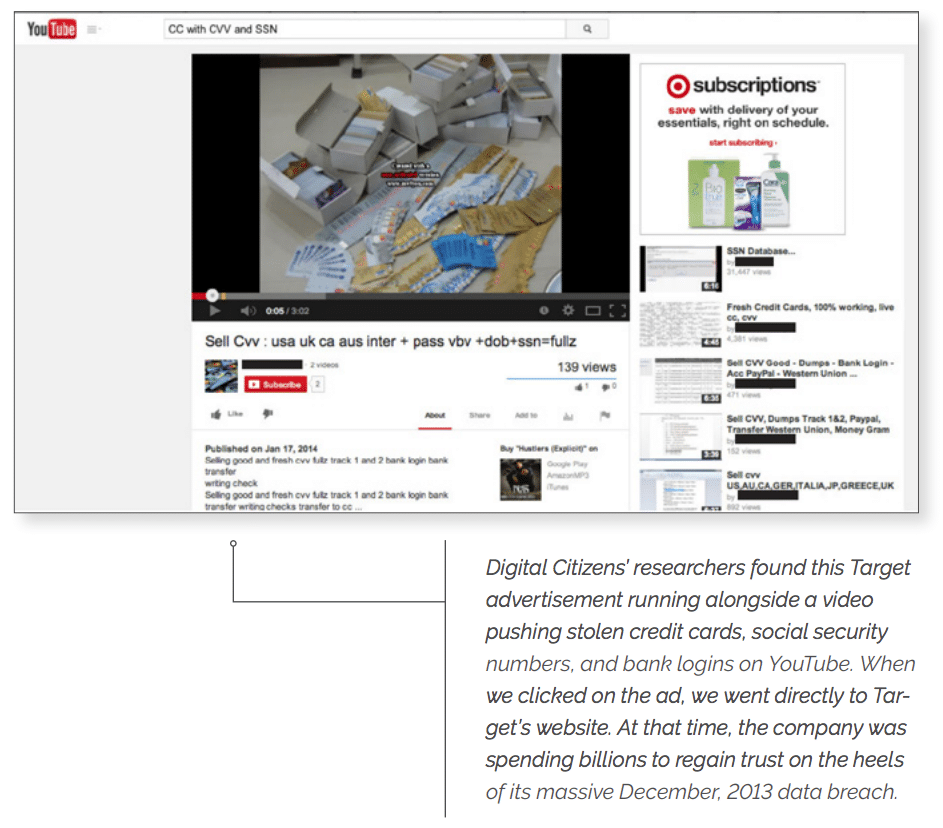

Revelations over the last two years about Russian efforts to manipulate the 2016 election, the misuse of users’ personal information and the spread of objectionable content such as terrorist jihadi videos have taken a toll on user trust in the platforms.

According to a Digital Citizens survey, a majority of Americans (51 percent) now say that Facebook, Google, and Twitter are not responsible companies “because they put making profits most of the time ahead of trying to do the right thing.” And the share of Americans calling for regulation of the platforms has increased from 35 percent to about half (50 percent) in a month.

“For years Google, Facebook, Twitter and other platforms have been urged to take a greater responsibility for their actions, including the content that appears on their sites,” said Tom Galvin, executive director of the Digital Citizens Alliance, in a news release. “They largely ignored those warnings because there was too much money at stake. Criminals and bad actors noticed. Hopefully, as platforms now face a crisis in trust they will at last heed the warnings and take responsibility.”

The report chronicles how digital platforms have resisted taking greater responsibility for what occurs on their platforms. For example, Google ignored calls over the last five years to take a more responsible approach to illegal and inappropriate content. For years, Google’s stock response was that it would remove content when it was flagged by users. But Americans seem to reject that approach: 65 percent said that companies such as Google should take a more active role in monitoring and taking down inappropriate content on their own instead of relying on users to flag it.

Eighteen months after allegations that it was manipulated in an attempt to influence the 2016 election, Facebook continues to face criticism about the proliferation of fake and divisive content. In the survey, 58 percent of Americans said Facebook has been unsuccessful in cleaning up its platform.

Overall, 54 percent said that companies such as Facebook, Google, and Twitter brought their recent problems on themselves by not doing a good enough job policing their content. That’s compared to 26 percent who said the problems were outside their control.

The report lays out a path forward to regain trust and create safe digital neighborhoods:

- Hiring of a more diverse multi-cultural workforce dedicated to identifying inappropriate content and illegal activities and then removing them. Digital Citizens has long noted that Google’s technology enables it to place relevant ads even on inappropriate content. That algorithm could be deployed to flag suspicious content for inspection.

- Establishment of a cross-platform initiative to identify and ban bad actors. Digital Citizens has long advocated for such an initiative while acknowledging it will pose technical and legal challenges. This could include analyzing usage data that the platforms already collect to highlight behavior that is anomalous and suggests illicit, unlawful or illegal conduct.

- Platforms could create digital fingerprints of unlawful conduct that are shared across platforms to proactively block such conduct, as is done with child pornography. There is also the model used by casinos to identify cheats and share that information globally. Digital platforms would then have the capability to decide whether to de-list or demote websites offering illicit goods and services, and the ability to stop the spread of illegal behavior that victimizes their users.

- Digital platforms should collaborate to create uniform basic privacy settings that are easily understood by users. Internet users are generally at a loss as to how their information is collected and disseminated.

“Regaining trust must start with an honest conversation with the American people about how these platforms have been used and misused,” said Galvin. “I hope it happens because these platforms are part of the fabric of society and we need them to be trusted.”

The survey of 1,020 Americans included in the “Digital Platforms in Crisis” report was conducted by SurveyMonkey from March 24 to March 30 and has a margin of error of plus or minus 3 percent.