It should come as no surprise that AI can be a scamming goldmine for malicious e-predators, and businesses and consumers alike can easily fall prey to these evolving threats. New research from passwordless, phishing-resistant MFA provider Beyond Identity explores the diverse methods hackers are now employing to breach systems, steal sensitive information and automate complex processes with the help of generative AI technology.

The firm’s new survey of 1,000+ Americans demonstrates exactly how convincing ChatGPT scams can be, and offers insights on what consumers and businesses can do to protect themselves from falling victim to fraudulent messages, unsafe applications and password theft.

Survey respondents were asked to review different schemes and say whether they would be susceptible and, if not, to identify the factors that aroused suspicion. Notably, 39 percent said they would fall victim to at least one of the phishing messages in the survey, 49 percent would be tricked into downloading a fake ChatGPT app and 13 percent have used AI to generate passwords.

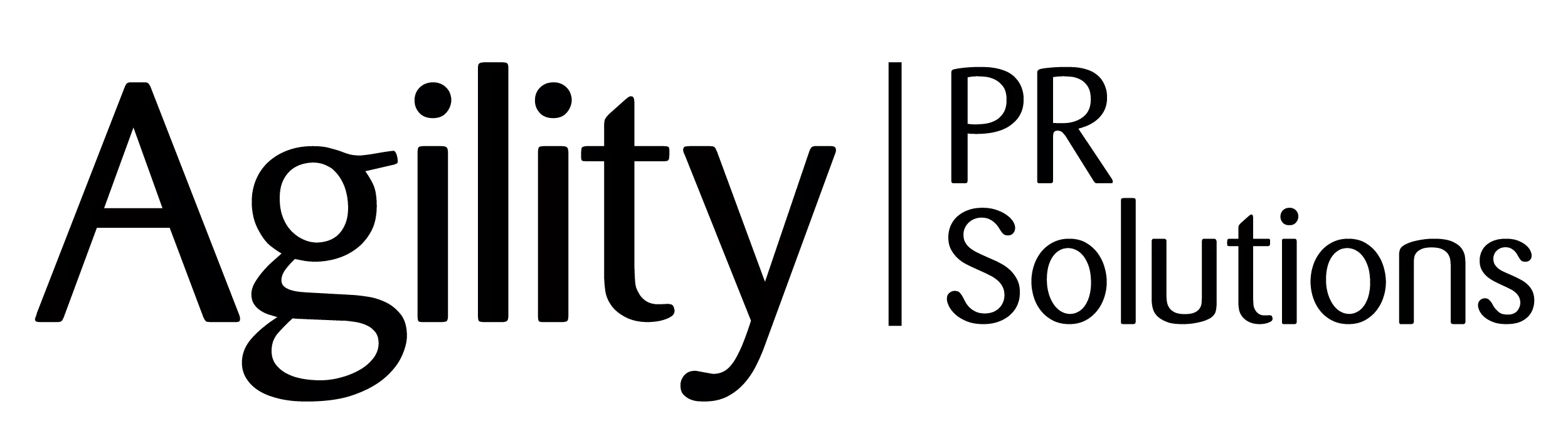

As part of the survey, ChatGPT drafted phishing emails, texts and posts, and respondents were asked to identify which were believable. Among the 39 percent who said they would fall victim to at least one of the options, a social media post scam (21 percent) and a text message scam (15 percent) were the most commonly believed. For those wary of all the messages, the top giveaways were suspicious links, strange requests and requests for unusual amounts of money.

“With adversaries using AI, the level of difficulty for attackers will be markedly reduced. While writing well-crafted phishing emails is a first step, we fully expect hackers to use AI across all phases of the cybersecurity kill chain,” said Jasson Casey, CTO of Beyond Identity, in a news release. “Organizations building apps for their customers or protecting the internal systems used by their workforce and partners will need to take proactive, concrete measures to protect data—such as implementing passwordless, phish-resistant multi-factor authentication (MFA), modern Endpoint Detection and Response (EDR) software and zero trust principles.”

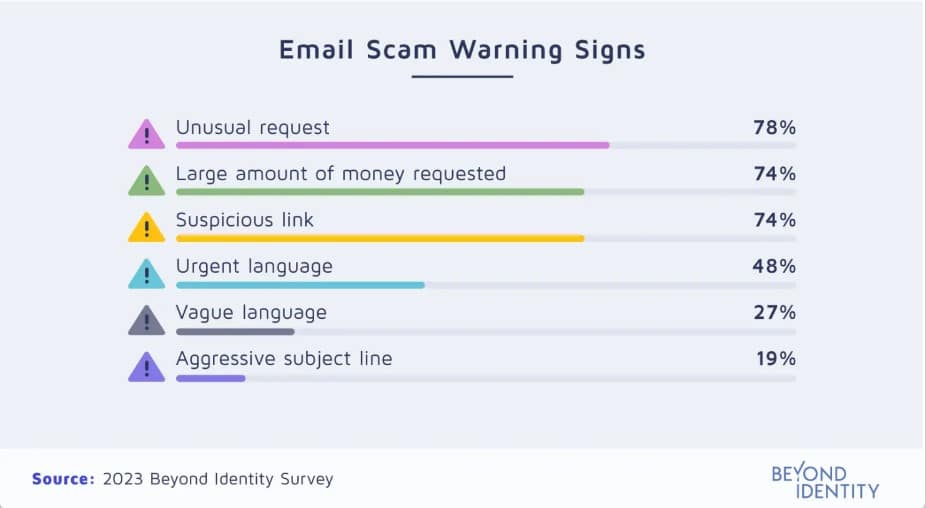

Although 93 percent of respondents had never had their information stolen through an unsafe app in real life, 49 percent were fooled when trying to identify the real ChatGPT app among six options that included real-world copycats. Interestingly, those who had fallen victim to app fraud in the past were much more likely to be fooled again.

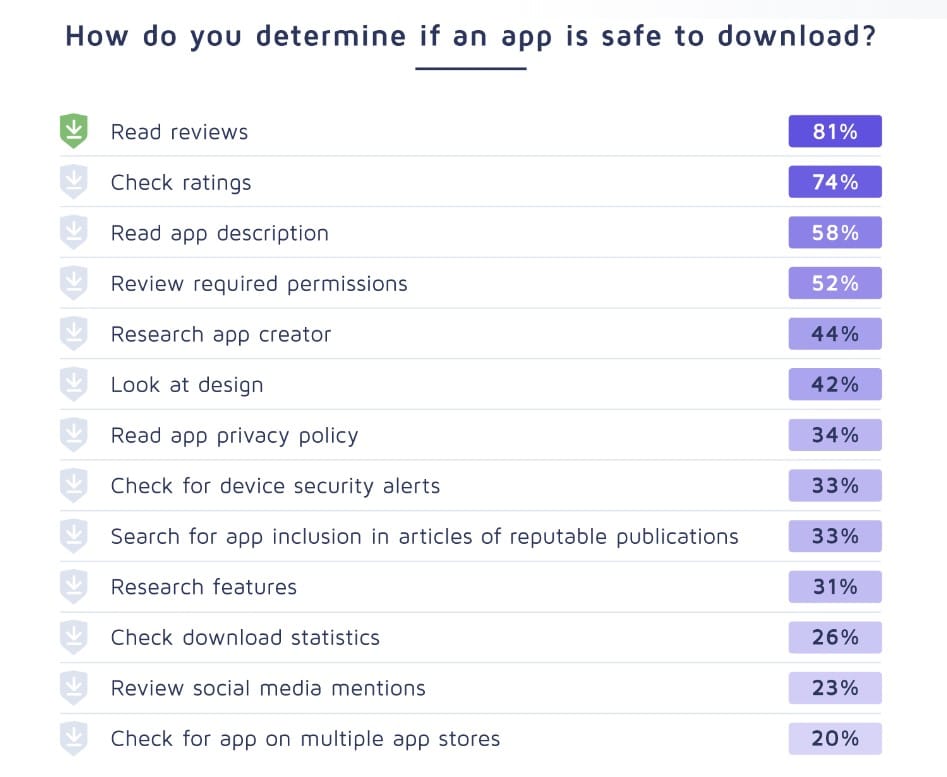

The survey also explored how ChatGPT can be leveraged by hackers for social engineering purposes. For instance, ChatGPT can use easy-to-find personal information to generate lists of probable passwords for use in attempts to breach accounts. This is a problem for the one in four respondents who use personal information in their passwords, such as birth dates (35 percent) or pet names (34 percent), details that can be readily found on social media, business profiles and phone listings.
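To make the risk concrete from the defender's side, here is a minimal Python sketch of a check that flags passwords built from known personal details. It is an illustration only and is not taken from the Beyond Identity report: the function names `date_fragments` and `personal_info_hits`, the sample pet name and the sample birth date are all hypothetical, and the matching is deliberately simple (it ignores case and punctuation but does not handle substitutions such as "1" for "i").

```python
import re
from datetime import date


def date_fragments(d: date) -> set[str]:
    """Common ways a date shows up inside a password (e.g. 1990, 0704, 070490)."""
    yyyy, yy = f"{d.year:04d}", f"{d.year % 100:02d}"
    mm, dd = f"{d.month:02d}", f"{d.day:02d}"
    return {yyyy, mm + dd, dd + mm, mm + dd + yy, dd + mm + yy,
            mm + dd + yyyy, dd + mm + yyyy}


def personal_info_hits(password: str, names: list[str], dates: list[date]) -> list[str]:
    """Return the personal tokens found in the password, ignoring case and punctuation."""
    normalized = re.sub(r"[^a-z0-9]", "", password.lower())
    tokens = {re.sub(r"[^a-z0-9]", "", name.lower()) for name in names}
    for d in dates:
        tokens |= date_fragments(d)
    # Skip very short tokens to limit accidental matches.
    return sorted(t for t in tokens if len(t) >= 3 and t in normalized)


# Hypothetical example: a pet name plus a birth year is flagged.
print(personal_info_hits("Biscuit!1990", ["Biscuit"], [date(1990, 7, 4)]))
```

A non-empty result means the password reuses details an attacker could plausibly collect from public profiles. An empty result says nothing about overall strength; it only means none of the supplied details were found.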

While longer passwords with random characters and no personal information may seem like the best way to combat this malicious AI capability, the report is clear: any and all passwords are a critical vulnerability for organizations, since bad actors will find other, easier ways into accounts. That makes passwordless and phish-resistant MFA an absolute necessity.